
Pose estimation using deep learning

Pose estimation is a well-established task in computer vision [1, 24, 29, 34, 42, 43, 47]. Parametric models [30, 36] such as SMPL simplify the representation of the human body, generating a dense 3D mesh from only a small set of parameters for body shape and joint angles.
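To make this compactness concrete, below is a minimal sketch of the shape component of a SMPL-style model, assuming NumPy. The template mesh and shape basis are random placeholders rather than real SMPL data; only the vertex count (6,890) and the use of 10 shape coefficients follow SMPL's conventions. Full SMPL additionally applies pose-dependent corrections and linear blend skinning driven by 24 joint rotations, which this sketch omits.

```python
import numpy as np

# Sizes follow SMPL conventions, but the template and shape basis below are
# random stand-ins for illustration, not the real SMPL model files.
NUM_VERTICES = 6890
NUM_BETAS = 10

rng = np.random.default_rng(0)
template = rng.normal(size=(NUM_VERTICES, 3))                            # mean body mesh T
shape_dirs = rng.normal(scale=0.01, size=(NUM_VERTICES, 3, NUM_BETAS))   # linear shape basis S


def shaped_vertices(betas: np.ndarray) -> np.ndarray:
    """Return T + S @ betas: a dense (V, 3) mesh determined by NUM_BETAS numbers."""
    # (V, 3, B) @ (B,) contracts the last axis and yields a (V, 3) array.
    return template + shape_dirs @ betas
```

Here 10 numbers determine 6,890 × 3 = 20,670 vertex coordinates, which is the kind of simplification parametric body models provide.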

While conventional methods [23, 25] use 3D ground-truth data for supervision, recent efforts have explored geometric cues and multi-view consistency to predict the body mesh from RGB images [5, 22, 45]. Gong et al. [12] use multiple 2D modalities to predict the SMPL body and train their model with self-correction between the inputs and the 2D projections of the prediction. Inspired by this line of research in pose estimation, we develop BodyMAP-WS, a version of our method that learns 3D pressure maps without direct supervision. This approach reduces the reliance on comprehensive ground-truth annotations, simplifying data collection and enabling training on large unlabeled datasets.
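The idea of supervising a 3D prediction through its 2D projection can be sketched as follows. This is one plausible form of such a self-consistency signal, assumed for illustration and not necessarily the loss BodyMAP-WS uses: predicted per-vertex pressures are splatted onto a mattress grid (keeping the maximum value per cell) and compared with the observed 2D pressure image by mean squared error. The axis convention (x, y in the mattress plane), the `cell_size` parameter, and both helper names are hypothetical.

```python
import numpy as np


def project_to_grid(vertices, pressures, grid_shape, cell_size):
    """Splat per-vertex pressures onto a 2D grid, keeping the max value per cell.

    vertices: (V, 3) array; x and y lie in the mattress plane (hypothetical convention).
    pressures: (V,) non-negative predicted per-vertex pressure.
    """
    rows, cols = grid_shape
    r = np.clip(np.floor(vertices[:, 1] / cell_size).astype(int), 0, rows - 1)
    c = np.clip(np.floor(vertices[:, 0] / cell_size).astype(int), 0, cols - 1)
    grid = np.zeros(grid_shape)
    # Unbuffered in-place max, so several vertices landing in one cell are handled correctly.
    np.maximum.at(grid, (r, c), pressures)
    return grid


def projection_consistency_loss(vertices, pressures, observed_image, cell_size):
    """Mean squared error between the projected prediction and the observed 2D image."""
    rendered = project_to_grid(vertices, pressures, observed_image.shape, cell_size)
    return float(np.mean((rendered - observed_image) ** 2))
```

This NumPy version only computes the loss value. A training pipeline would need an autodiff framework, and the hard cell assignment shown here is not differentiable with respect to vertex positions.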

In-bed human pose estimation

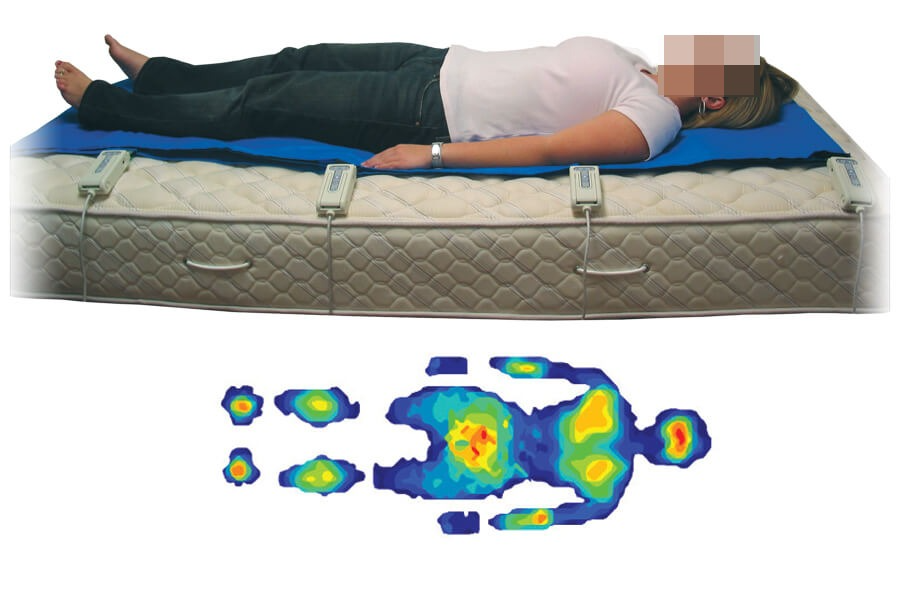

In-bed human poses present distinct challenges compared with the active poses seen in sports. Heavy occlusion from blankets, together with self-occlusion, complicates estimation. In addition, body visibility is limited in modalities such as pressure images, which fail to capture limbs that are not in contact with the mattress. Datasets such as the SLP dataset [26, 28], featuring real-world human participants, and the synthetic BodyPressureSD dataset [9], which we leverage in this study, have propelled research in this domain.

Yin et al. [48] introduce pyramid fusion, incorporating modalities such as RGB, pressure, depth, and IR images. However, their design requires multiple model passes to derive the final predicted body mesh. Given potential clinical deployments and privacy concerns, we avoid RGB images in our work. Clever et al. propose methods [8, 9] that use multiple models to estimate the body mesh, and each relies on a single modality (pressure images and depth images, respectively). Our method, BodyMAP, simplifies this process by using a single pass through a single model to jointly predict both the body mesh and the applied pressure map, while taking multiple visual modalities as input. Our findings show that this streamlined approach significantly outperforms prior methods.
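As a rough illustration of a single pass through a single model with multiple inputs, the sketch below assumes depth and 2D pressure images as the two input modalities, stacks them as channels, passes them through one shared encoder, and reads out body-model parameters and per-vertex pressure from two heads in the same forward pass. Everything here is a hypothetical stand-in: the input size, hidden width, random linear weights, and the 85-value parameter split (10 shape, 72 pose, 3 translation) are assumptions, not BodyMAP's architecture.

```python
import numpy as np

# Hypothetical dimensions, chosen only for illustration.
H, W = 64, 27           # input image size
NUM_SMPL_PARAMS = 85    # e.g. 10 shape + 72 pose + 3 translation
NUM_VERTICES = 6890     # SMPL mesh vertex count
HIDDEN = 128

rng = np.random.default_rng(0)
W_enc = rng.normal(scale=0.01, size=(2 * H * W, HIDDEN))        # shared encoder weights
W_mesh = rng.normal(scale=0.01, size=(HIDDEN, NUM_SMPL_PARAMS))  # body-model head
W_pressure = rng.normal(scale=0.01, size=(HIDDEN, NUM_VERTICES)) # per-vertex pressure head


def forward(depth, pressure):
    """One pass: fuse both modalities, then predict body and pressure jointly."""
    x = np.stack([depth, pressure]).reshape(-1)           # early fusion by channel stacking
    h = np.maximum(x @ W_enc, 0.0)                        # shared features (ReLU)
    smpl_params = h @ W_mesh                              # body mesh parameters
    vertex_pressure = np.maximum(h @ W_pressure, 0.0)     # non-negative per-vertex pressure
    return smpl_params, vertex_pressure
```

The design point is that both outputs are read from the same feature vector `h`, so there is no second model whose errors can compound with those of the first.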

3D applied pressure map prediction

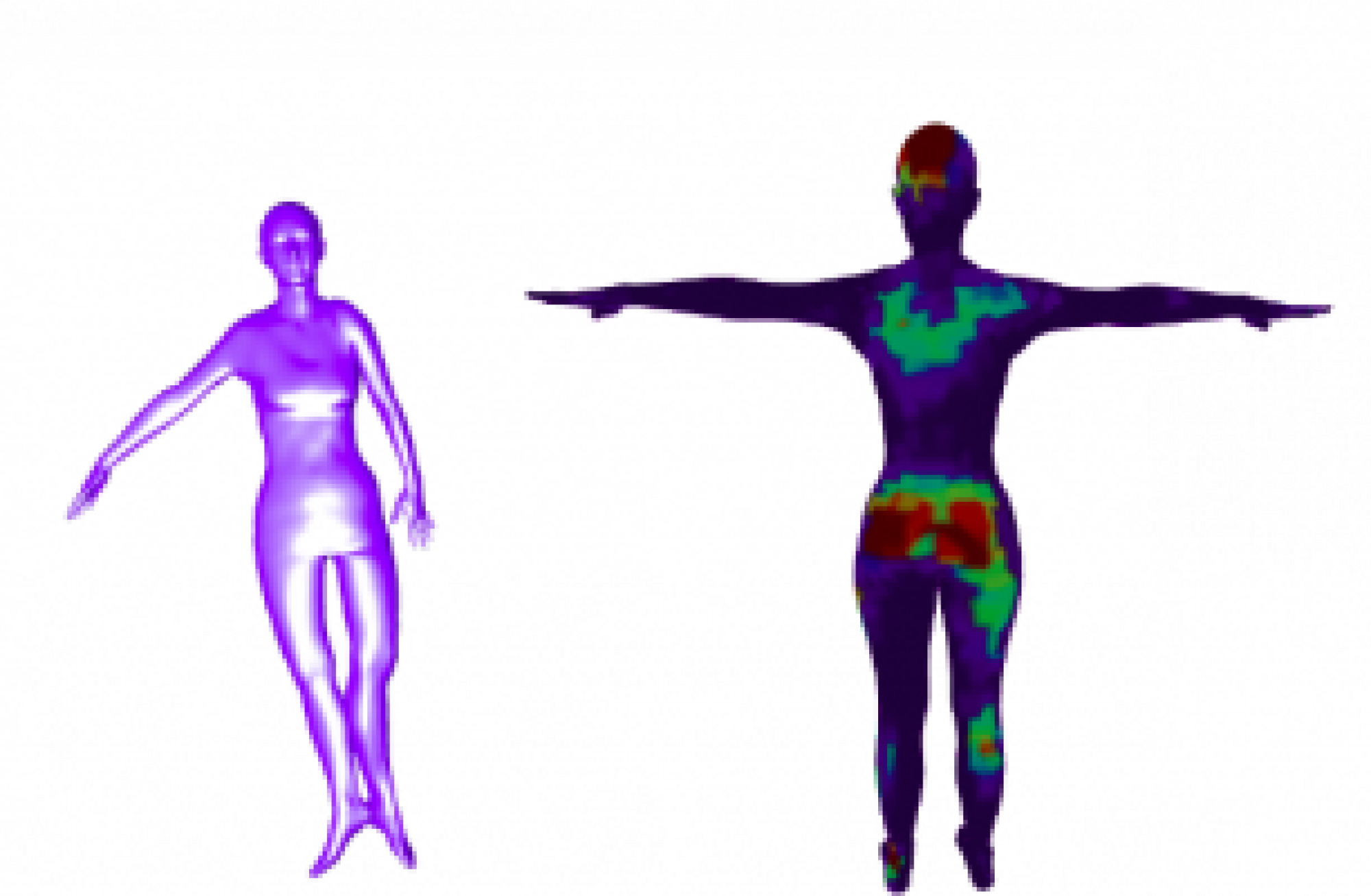

Prior techniques [9, 27] have explored predicting 2D pressure images from alternative modalities, namely depth and RGB images, respectively, offering a cost-effective way to obtain information about applied pressure. However, as illustrated in Fig. 2, these 2D pressure images fail to localize peak-pressure regions on the body.

In the related domain of pain monitoring, 3D pain drawings have emerged as more effective visualization tools than their 2D counterparts for analyzing body pain [11, 41].

In this work, we extend this concept by directly predicting the 3D applied pressure map on a human body mesh, overcoming the inability of 2D pressure images to pinpoint body regions under high pressure. Only a few prior methods predict pressure on the human body. Wang et al. [46] predict the pressure applied during cloth interaction, and only on specific body regions such as the arms. Clever et al. [9] proposed the concept of full-body 3D pressure maps, developing a multi-model pipeline, trained in multiple stages, that predicts both the body mesh and a 2D pressure image from a depth image.

Finally, they approximate the 3D pressure map by vertically projecting the 2D pressure image onto the estimated 3D human body, but without supervision or rigorous analysis. While a multi-model pipeline can help break down this regression problem, we note that errors from each model often compound and degrade the predicted 3D pressure maps. In contrast, we devise a unified model architecture, BodyMAP, that estimates the body mesh and the pressure map jointly. Our model predicts a per-vertex 3D pressure distribution and uses a PointNet module [37] for pressure map prediction across the entire human body.
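For contrast, a naive vertical-projection approximation can be sketched in a few lines, assuming NumPy, a hypothetical coordinate convention (x, y in the mattress plane, z as height), and a pressure image whose cells are squares of side `cell_size`. Each vertex simply inherits the value of the cell beneath it; the exact procedure of Clever et al. [9] may differ, and the function name is hypothetical.

```python
import numpy as np


def vertical_projection(vertices, pressure_image, cell_size):
    """Assign each vertex the value of the pressure-image cell directly below it.

    vertices: (V, 3) array with x, y in the mattress plane and z as height
              (hypothetical convention).
    pressure_image: (rows, cols) 2D pressure image.
    cell_size: edge length of one sensor cell, in the same units as vertices.
    """
    rows, cols = pressure_image.shape
    # Height (z) is ignored: only the x, y footprint selects the cell.
    r = np.clip(np.floor(vertices[:, 1] / cell_size).astype(int), 0, rows - 1)
    c = np.clip(np.floor(vertices[:, 0] / cell_size).astype(int), 0, cols - 1)
    return pressure_image[r, c]
```

Because only x and y are used, in this naive form a vertex on the upper surface of the body receives the same value as the vertex directly below it that touches the mattress, which illustrates one way projected pressure can be misplaced on the body surface.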