Problem Formulation

During Spring 2023, we focused on human motion generative model with increased diversity.

- Goal: Text-driven human motion synthesis

- Input: Text specifying the human motion

- Output: Synthesized 3D human motion matching the input text

During Fall 2023, we extended our problem to human motion synthesis with human-object interaction.

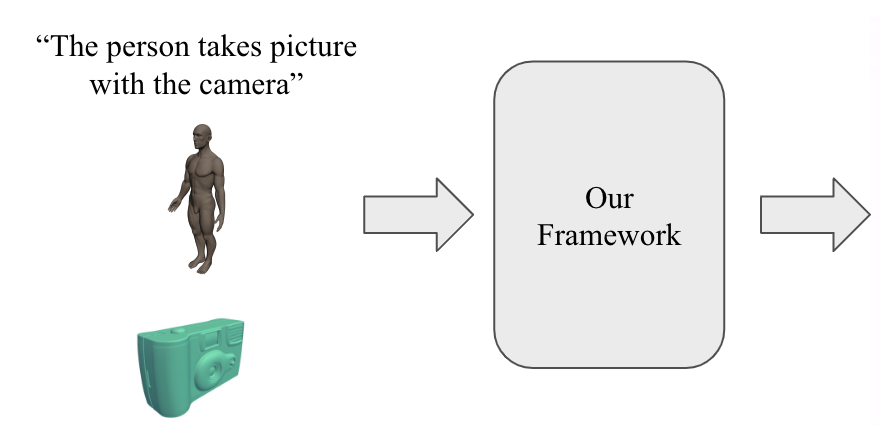

- Goal: Text-driven human motion synthesis with human-object interaction.

- Input: Text specifying the interaction between hand and object, initial body pose, initial object mesh

- Output: Synthesized 3D human-object interaction motion matching the input text

Why Challenging?

Building a text-driven human motion synthesis model is a challenging task due to the high degree of freedom involved in human motion, ambiguity of natural language input in text-to-motion synthesis, and difficulty in achieving natural-looking human motions.

Additionally, incorporating human-object interaction is particularly challenging because humans engage with objects in a highly diverse and detailed manner. Also, human hands can manipulate objects in intricate ways, requiring precise modeling of dexterous movements and the nuanced physical interactions between the hand and the object.

Our Work Summary

MotionGPT (Spring 2023)

We proposes MotionGPT, a doubly text-conditional motion diffusion model, to address the limitations of previous text-based human motion generation methods by utilizing the extensive semantic information available in large language models (LLMs). We also enforce the physical constraint in an end-to-end differentiable manner to synthesize more natural human motion. Our method achieves new state-of-the-art quantitative results in terms of FID and motion diversity metrics. Furthermore, it has strong qualitative performance, producing natural results.

Human-Object Interaction (Fall 2024)

We introduce a novel diffusion-based framework to synthesize whole-body human hand-object interactions conditioned on text input. Our framework integrates dynamic contact region prediction, ensuring correct contact between the human hand and object. Quantitative results show that our framework consistently achieves high-quality motion performance in both seen and novel action scenarios.