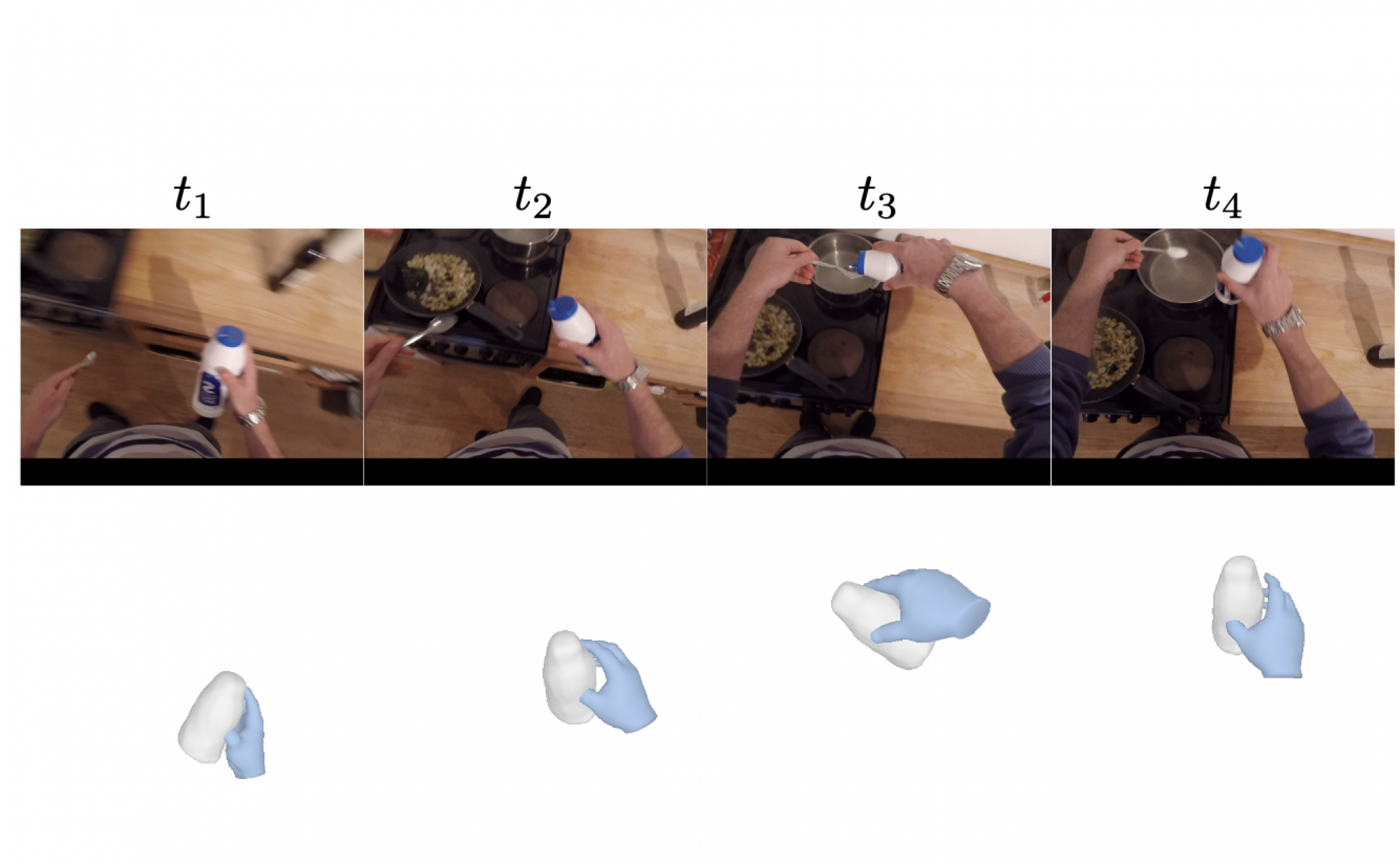

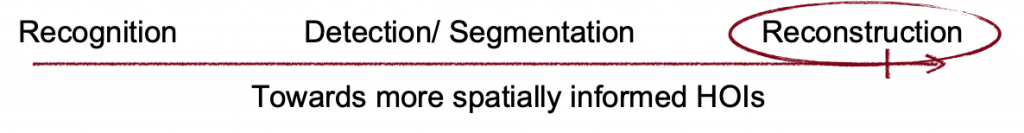

Hand-Object interaction has been a long-standing problem over years. As the field progresses, it becomes more and more spatially informed.

People initially formulated it as a recognition problem that each frame is classified into a verb-noun pair, e.g. playing golf, holding cup. Later, people wanted to think about where the action happens as well. For example, there have been many works that localize certain actions to the 2D space, either by predicting bounding boxes, or by predicting per-pixel segmentation masks. However, the actual hand-object interactions happen in the phyiscal 3D world. For example, given a picture of a human holding a cup, not only we are curious about which pixels corresponds to that action, We’d like to understand how we place our fingers around the cup surface in order hold it up.

Prior work for reconstruction of Hand Object Interactions can be categorized into 4 parts:

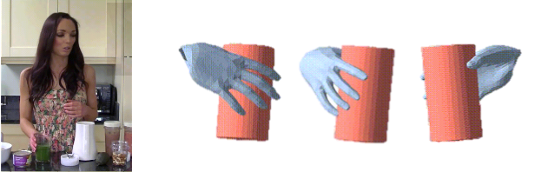

1. Human Object Interactions from Images: Reconstructing hand-held objects is difficult due to occlusion and lack of annotated data. Approaches can be divided into those that use templates and those that don’t. Early work assumed knowledge of object templates and reduced reconstruction to a pose estimation problem, using implicit feature fusion or explicit constraints. Recent work like IHOI uses data-driven priors from large datasets, but it is difficult to generate a consistent 3D shape across frames. While they are able to generate reasonable per frame predictions, it is not trivial to aggregate information from multiple views in one sequence and generate a time-consistent 3D shape. Our aim is to aggregate information across frames and ensure that the shape of a generic object is consistent throughout.

2. Hand-object interactions from Videos: Many prior works for articulated 3D shape reconstruction from videos often rely on multi-vew camera capture. While recent works like have shown promising results in reconstructing objects from monocular videos, they assume prior knowledge of the object’s template. They exploit all available related data (bounding boxes, hand keypoints, instance masks, 3D object models etc) to provide constraints for the reconstruction. Recent research has focused on reconstructing generic scenes from monocular videos without a template. In Meta’s work, they optimize object shape and pose trajectory for smaller segments and merge them by aligning poses estimated at overlapping frames. HHOR uses multiple views of objects provided by hand motion. However, these methods require carefully calibrated cameras and complete view coverage. Current research aims to reconstruct objects from everyday in-the-wild monocular clips without relying on prior knowledge of the object’s template or calibrated cameras and coverage of all views.

3. Neural Implicit Fields for Dynamic Scenes: Given the dynamic nature of the poses and articulation, reconstruction of HOIs could be challenging. Recent research has explored dynamic scene reconstruction using NeRFs, with D-NeRF and NerFie aiming to achieve temporal consistency. Banmo, LASR, and Magic Pruning are deep learning-based methods that focus on reconstructing deformable objects like animals. While Banmo estimates camera pose using bundle adjustment, LASR uses neural implicit representations to model surface deformation, and Magic Pruning introduces a pruning step to remove redundant features. Although these methods perform quite well in reconstructing dynamic general objects, we specifically focus and aim to get better reconstructions of particular scenes that involve a hand object interaction.

4. Diffusion Models: Generative models have shown great potential in text-to-image synthesis tasks and guiding 3D models based on text prompts. View conditioned diffusion models such as Dream Fusion, Jacobian Chain Products, and Magic3D have demonstrated progress in text-to-3D reconstruction tasks. While these works focus on generating 3D models from textual descriptions, other approaches such as NeRDi and RealFusion focus on 3D reconstruction from images. These methods rely heavily on RGB information to obtain novel views of the object. In contrast, we rely on geometry-based information to reconstruct 3D models, which can be beneficial in scenarios where RGB information is inadequate. We leverage surface normals, depth, and semantic masks from novel views to improve the quality of 3D object reconstruction.