Overview

Given a collection of images of a static scene, each captured with its own bokeh (shown left), how can we render a new image from the same viewpoint with a different bokeh setting (shown right)?

Our capstone project addresses this common problem in post-capture image processing. Using a neural rendering approach, we enable re-photography: re-rendering a captured scene with camera settings that were not used at capture time.

Motivation

People often capture interesting moments of their lives with smartphones and digital cameras. On the spur of the moment, we might take several images focused on some parts of the scene, only to later wish we had captured one focused on a different part.

Our project aims to bridge this gap by modeling a subset of camera settings, namely focus and aperture, so that we can render images with novel values of these settings.

Problem Statement

Given a set of images captured with varying camera settings (focus and aperture), we want to generate photorealistic images for new values of those settings.
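To make the two settings concrete, here is a minimal sketch, assuming an ideal thin lens, of how focus distance and aperture (f-number) determine the diameter of the blur disk, or circle of confusion, that an out-of-focus point produces on the sensor. It uses the standard thin-lens circle-of-confusion formula; the function name and the use of millimetres are illustrative assumptions, not code from the project repository.

```python
def circle_of_confusion(focal_length_mm, f_number, focus_dist_mm, object_dist_mm):
    """Blur-circle diameter on the sensor (mm) for a point at object_dist_mm
    when an ideal thin lens is focused at focus_dist_mm.

    Hypothetical helper for illustration; all distances are in millimetres.
    """
    if focus_dist_mm <= focal_length_mm:
        raise ValueError("focus distance must exceed the focal length")
    # Aperture diameter A = f / N: a smaller f-number means a wider aperture.
    aperture_mm = focal_length_mm / f_number
    # Magnification of the in-focus plane under the thin-lens equation.
    magnification = focal_length_mm / (focus_dist_mm - focal_length_mm)
    # Standard thin-lens CoC: c = A * |S2 - S1| / S2 * f / (S1 - f).
    return aperture_mm * abs(object_dist_mm - focus_dist_mm) / object_dist_mm * magnification


if __name__ == "__main__":
    # 50 mm lens at f/2, focused at 2 m, point at 4 m.
    print(circle_of_confusion(50, 2, 2000, 4000))
```

For a 50 mm lens at f/2 focused at 2 m, a point at 4 m blurs to roughly 0.32 mm on the sensor, a point at exactly 2 m stays sharp (diameter 0), and stopping down to f/4 halves the blur. Changing these two settings after capture is precisely what the project sets out to do.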

Approach

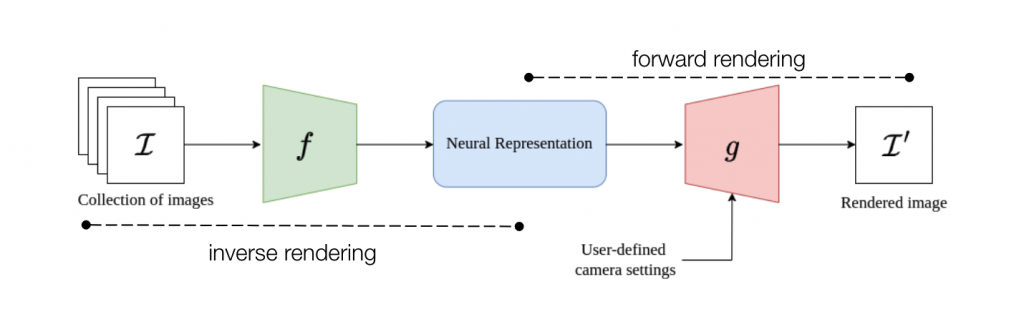

We break this problem into two parts: inverse rendering and forward rendering.

Inverse rendering

From a collection of input images, we build a neural representation of the scene using state-of-the-art neural rendering approaches. We experiment with two scene representations: Multi-Plane Images (MPI), a 2.5D representation of the scene, and Neural Radiance Fields (NeRF), a continuous 3D representation. Each has its own advantages and drawbacks, which are explained in the Approach section.
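An MPI stores the scene as a stack of fronto-parallel RGBA planes at fixed depths. The minimal sketch below shows how such a stack can be turned into an all-in-focus image from the reference viewpoint, using standard alpha "over" compositing written in its equivalent front-to-back form. It assumes NumPy arrays with planes ordered near to far; the function name and array layout are illustrative assumptions, not the project's API.

```python
import numpy as np


def composite_mpi(rgb, alpha):
    """Over-composite an MPI into a single image (front-to-back form).

    rgb:   (D, H, W, 3) colours of D fronto-parallel planes, ordered near to far
    alpha: (D, H, W, 1) per-plane opacities in [0, 1]
    Returns an (H, W, 3) image. Hypothetical helper for illustration.
    """
    # Fraction of light that passes each plane: prod of (1 - alpha) so far.
    passed = np.cumprod(1.0 - alpha, axis=0)
    # Transmittance reaching plane i counts only the planes strictly in front of it,
    # so shift by one and start with full transmittance (1) at the nearest plane.
    transmittance = np.concatenate([np.ones_like(alpha[:1]), passed[:-1]], axis=0)
    # Each plane contributes its colour weighted by its opacity times the
    # transmittance reaching it; summing over planes gives the pixel colour.
    weights = alpha * transmittance
    return (weights * rgb).sum(axis=0)


if __name__ == "__main__":
    # Half-transparent red plane in front of an opaque blue plane.
    rgb = np.array([[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]]).reshape(2, 1, 1, 3)
    alpha = np.array([0.5, 1.0]).reshape(2, 1, 1, 1)
    print(composite_mpi(rgb, alpha)[0, 0])
```

The per-plane weights `alpha * transmittance` are the same quantities that NeRF's volume-rendering quadrature computes along a ray, where each sample's opacity is 1 - exp(-sigma_i * delta_i) for density sigma_i and segment length delta_i.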

Forward rendering

From the neural scene representation, new images of the scene can be generated for a new set of camera settings. With MPIs, this is achieved through multi-ray sampling; with NeRF, volume rendering is used to generate the new image. In both cases, a thin-lens formulation is integrated into the rendering pipeline so that photorealistic defocus effects can be generated.
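The thin-lens idea behind both pipelines can be sketched as follows: instead of tracing one pinhole ray per pixel, trace rays from many points on a circular aperture, all aimed at the point where the pixel's pinhole ray meets the plane of focus, and average their colours. Points on that plane stay sharp, while points off it spread into a blur disk. This is a generic sketch in camera space (lens at the origin, looking along +z) assuming NumPy. `render_ray` is a hypothetical callback standing in for an MPI lookup or NeRF volume rendering, and the project's actual multi-ray sampling may differ.

```python
import numpy as np


def thin_lens_rays(pinhole_dir, aperture_radius, focus_dist, n_samples, rng=None):
    """Sample rays through a circular aperture that converge on the in-focus
    point of one pixel. Camera space: lens centre at origin, +z forward.
    Hypothetical helper for illustration.
    """
    rng = np.random.default_rng() if rng is None else rng
    d = np.asarray(pinhole_dir, dtype=float)
    d = d / d[2]                   # scale so z == 1 (requires d[2] > 0)
    focus_point = d * focus_dist   # where the pinhole ray hits the plane z = focus_dist
    # Uniform samples on the aperture disk; sqrt keeps density uniform in area.
    r = aperture_radius * np.sqrt(rng.random(n_samples))
    theta = 2.0 * np.pi * rng.random(n_samples)
    origins = np.stack([r * np.cos(theta), r * np.sin(theta), np.zeros(n_samples)], axis=-1)
    # Every ray starts on the lens and passes through the same in-focus point.
    dirs = focus_point - origins
    dirs /= np.linalg.norm(dirs, axis=-1, keepdims=True)
    return origins, dirs


def defocus_pixel(render_ray, pinhole_dir, aperture_radius, focus_dist,
                  n_samples=64, rng=None):
    """Average the colours of thin-lens rays to get one defocused pixel.

    render_ray(origin, direction) -> RGB is a hypothetical renderer callback.
    """
    origins, dirs = thin_lens_rays(pinhole_dir, aperture_radius, focus_dist, n_samples, rng)
    return np.mean([render_ray(o, v) for o, v in zip(origins, dirs)], axis=0)


if __name__ == "__main__":
    # Toy renderer: colour depends only on the ray direction.
    toy = lambda o, v: np.abs(v)
    print(defocus_pixel(toy, [0.1, -0.2, 1.0], aperture_radius=0.05, focus_dist=3.0))
```

A larger `aperture_radius` widens the blur, while `focus_dist` selects which depth stays sharp; setting the radius to zero recovers a pinhole camera, since every sampled ray then starts at the lens centre.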

Our code is available at https://github.com/nitchith/Neural-Rephotography