Important Links

Final Presentation slides

https://docs.google.com/presentation/d/1GP6HleKgcuyU526DFePd4tt4jYadcrqk7erMavflU8E/edit?usp=sharing

Final Presentation video

https://drive.google.com/file/d/1gTfLjv6dU0m8QbaHzJsbSocp_BgwPlqR/view?usp=sharing

Preface:

During the Fall semester, our path took many twists and turns. We discarded the wheel-odometry-based approach because it was already a largely solved problem and our sponsors were not very interested in us pursuing it. We also saw a lot of interest from our audience in the semantic-map-based relocalization experiment.

Relocalization using semantic maps:

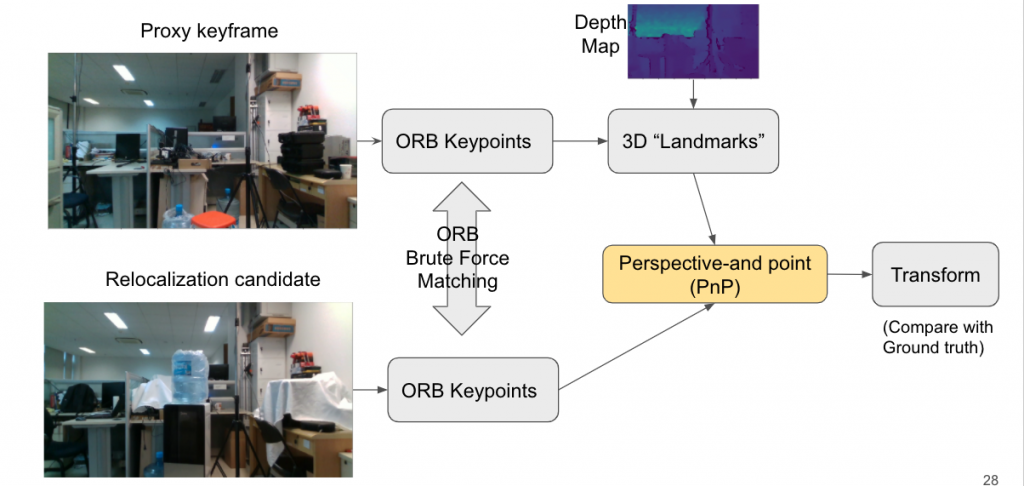

We pursued the relocalization experiment further to polish it, and provided an in-depth qualitative and quantitative analysis of the use of semantic maps for pose estimation. The figure below describes

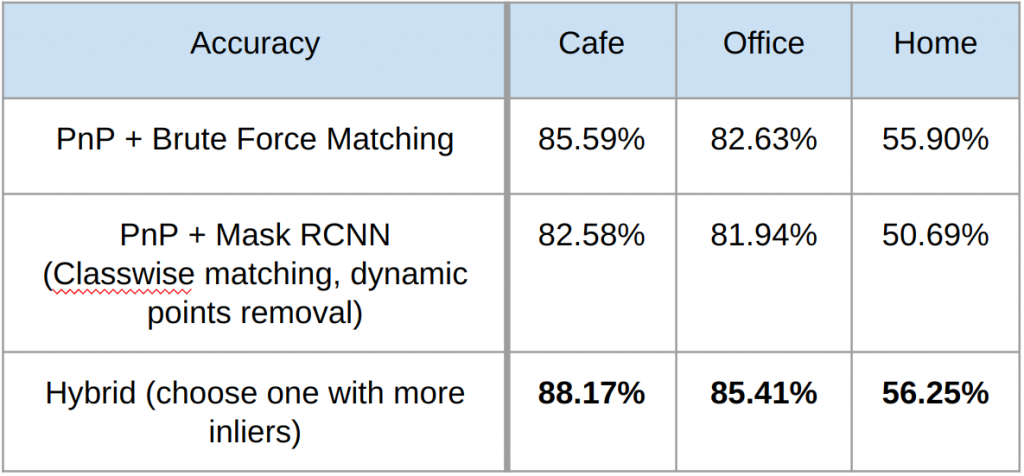

We concluded that semantic maps were not very helpful, primarily because most objects in an indoor environment fall outside the limited set of categories covered by SOTA object detectors and are therefore classified as background. Even though a hybrid of brute-force matching and semantic-map-based matching could outperform the brute-force approach alone, the improvement was marginal. Thus we began exploring an object-agnostic approach.

Depth-based Pruning

Now we discuss the idea we explored. We have the following constraints:

a) the current frame is dense RGB-D,

b) but the keypoints we inserted into the map are sparse.

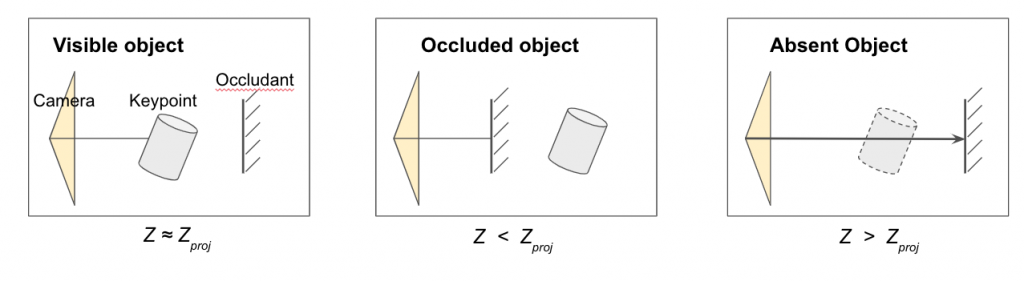

After a first pass, suppose we relocalize correctly in the second pass. We then want to use the dense depth of the current frame for map maintenance. If an old keypoint lies in front of the current depth map, that is, closer to the camera than the surface now measured along the same ray, we conclude that the keypoint belonged to an object which is no longer there, so it should be removed. This is illustrated in the accompanying figure.
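The pruning test described above can be sketched in a few lines. This is only a minimal illustration, not the project's ORBSLAM3 code: it assumes a pinhole camera, that each map keypoint has already been transformed into the current camera frame using the relocalized pose, and that the depth image is a plain 2D list in metres. The names `Intrinsics`, `should_prune`, `prune_map`, and the `margin` threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


@dataclass
class Intrinsics:
    """Hypothetical pinhole intrinsics (focal lengths and principal point, in pixels)."""
    fx: float
    fy: float
    cx: float
    cy: float


def should_prune(point_cam: Point, depth: Sequence[Sequence[float]],
                 K: Intrinsics, margin: float = 0.05) -> bool:
    """Return True if a map keypoint lies in front of the measured depth surface.

    point_cam is assumed to be expressed in the current camera frame.
    margin is an assumed tolerance (metres) that absorbs depth noise.
    """
    x, y, z = point_cam
    if z <= 0:
        return False  # behind the camera: the frame gives no evidence
    # Project the keypoint into the image with the pinhole model.
    u = int(round(K.fx * x / z + K.cx))
    v = int(round(K.fy * y / z + K.cy))
    if not (0 <= v < len(depth) and 0 <= u < len(depth[0])):
        return False  # outside the field of view: keep the keypoint
    measured = depth[v][u]
    if measured <= 0:
        return False  # invalid depth reading: keep the keypoint
    # The keypoint is closer than the observed surface, so we can see through
    # the place where it used to be.
    return z < measured - margin


def prune_map(points_cam: Sequence[Point], depth: Sequence[Sequence[float]],
              K: Intrinsics, margin: float = 0.05) -> List[Point]:
    """Keep only the keypoints that the current depth frame does not contradict."""
    return [p for p in points_cam if not should_prune(p, depth, K, margin)]
```

Keypoints that are behind the measured surface are kept, since they may simply be occluded. The choice of `margin` trades off how aggressively keypoints are deleted against sensitivity to depth noise.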

Platform and Dataset

We shifted to ORBSLAM3 because its multi-map setting looks very relevant for long-term SLAM scenarios. However, the code had only just been released and was still somewhat buggy, which created many problems. Another part where we struggled a lot was collecting our own dataset. We tried various sensors and setups at home, but because of problems such as noisy depth, variable lighting, textureless walls, and no way of measuring a ground-truth (GT) trajectory, we weren’t able to collect a reliable dataset for long-term SLAM. Hence, we finally decided to shift to Facebook Replica, a synthetic dataset which allows navigation in 6 scans of an apartment, each with small dynamic changes.

Evaluation on Synthetic Dataset

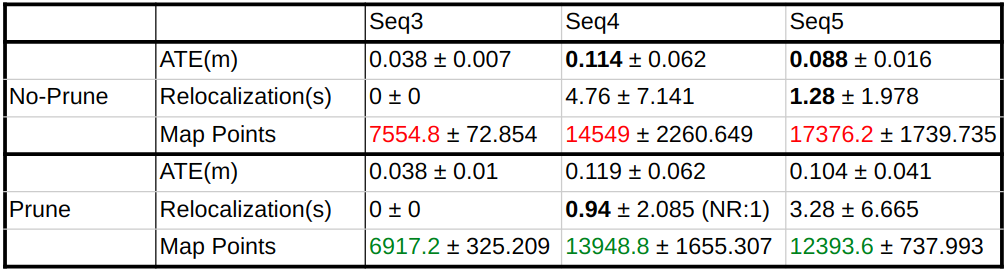

For evaluation, we first had to align the different meshes of each scene using global point cloud registration followed by ICP. Then we had to align the axes of ORBSLAM3 with those of Facebook Replica. Another interesting detail is that Facebook Replica has its camera fixed at 180° to the front direction. After adjusting for all of these, we evaluated the long-term performance of our systems on consecutive sequences, using these 3 metrics:

1) With the first sequence aligned, we calculate the absolute trajectory error (ATE) for the unaligned consecutive sequences.

2) For each new sequence, we calculate the time taken for relocalization.

3) We also count the total number of new keypoints added after processing each sequence.
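As an illustration of the first metric, ATE is commonly reported as the root-mean-square of the position error between estimated and ground-truth camera positions. The sketch below assumes the two trajectories are already time-associated and expressed in the same frame (here, the frame fixed by aligning the first sequence), so no further alignment is applied. The function name `ate_rmse` is hypothetical and this is not necessarily the exact evaluation script we used.

```python
import math
from typing import Sequence, Tuple

Position = Tuple[float, float, float]


def ate_rmse(estimated: Sequence[Position],
             ground_truth: Sequence[Position]) -> float:
    """RMSE of the translational error between matched trajectory positions.

    Both trajectories are assumed to have the same length, with the i-th
    entries corresponding to the same timestamp, in a common frame.
    """
    if len(estimated) != len(ground_truth) or not estimated:
        raise ValueError("trajectories must be non-empty and of equal length")
    squared_error = 0.0
    for est, gt in zip(estimated, ground_truth):
        # Squared Euclidean distance between the two positions.
        squared_error += sum((e - g) ** 2 for e, g in zip(est, gt))
    return math.sqrt(squared_error / len(estimated))
```

Because later sequences are not re-aligned, any drift or relocalization error accumulated across sequences shows up directly in this number.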

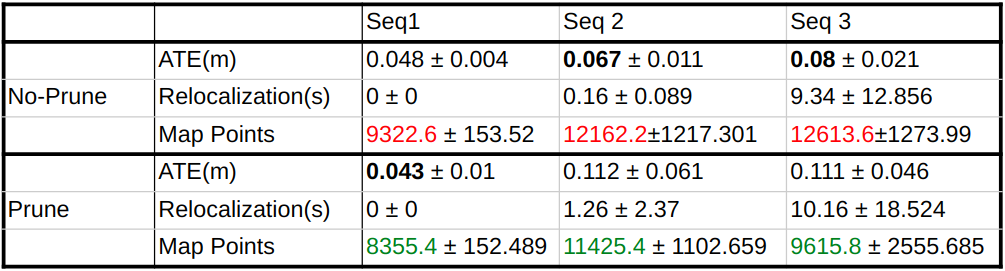

Our results on two sequences based on these metrics are given below:

As seen from the table, our final conclusion is that depth-based pruning does limit the number of keypoints, but at the cost of a higher ATE. There is therefore scope for tuning the threshold parameter, and for trying more robust approaches to deciding when a keypoint should be deleted.