Links

Our project has been wrapped up into a publication. Code and paper could be found in our project page.

Summary – Fall 2023

In this semester:

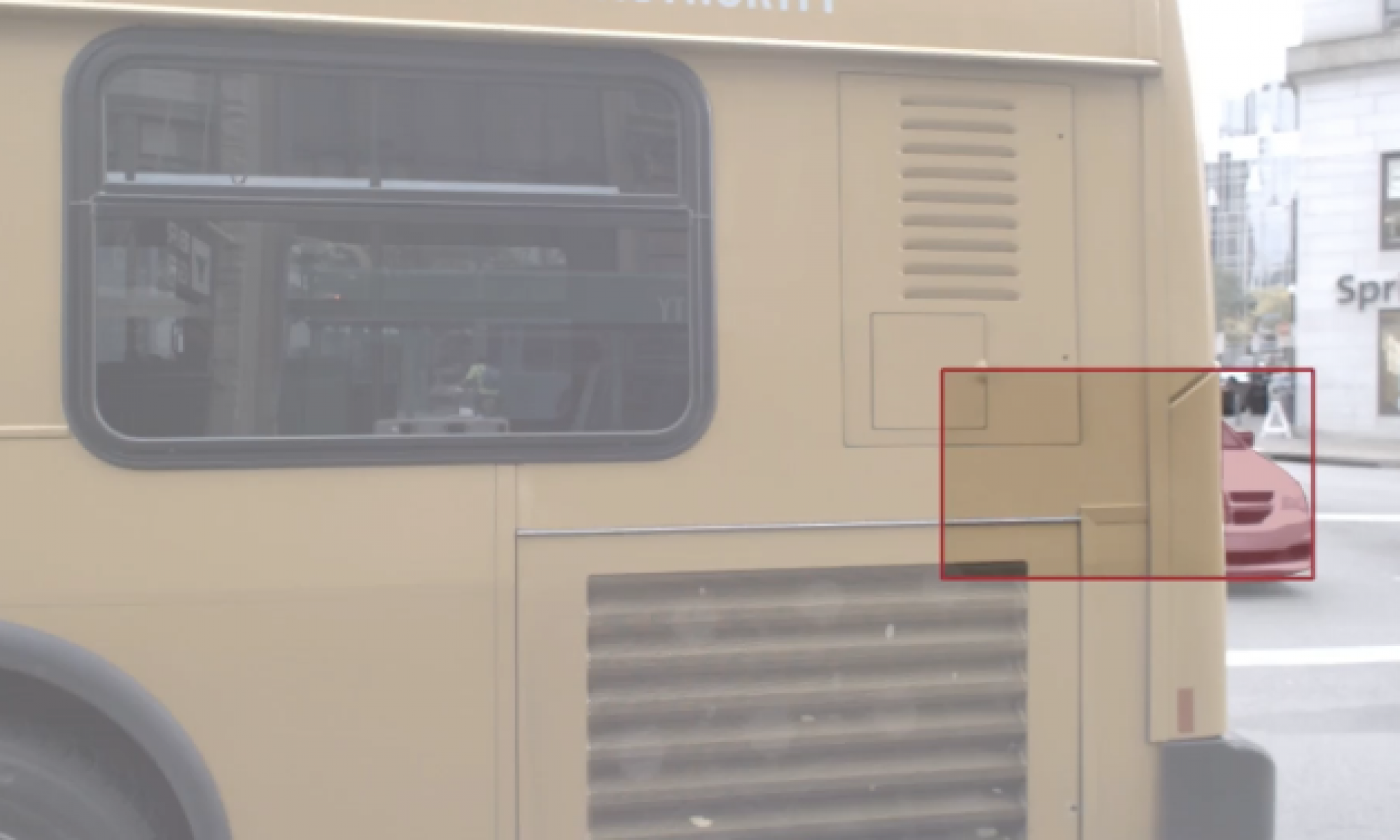

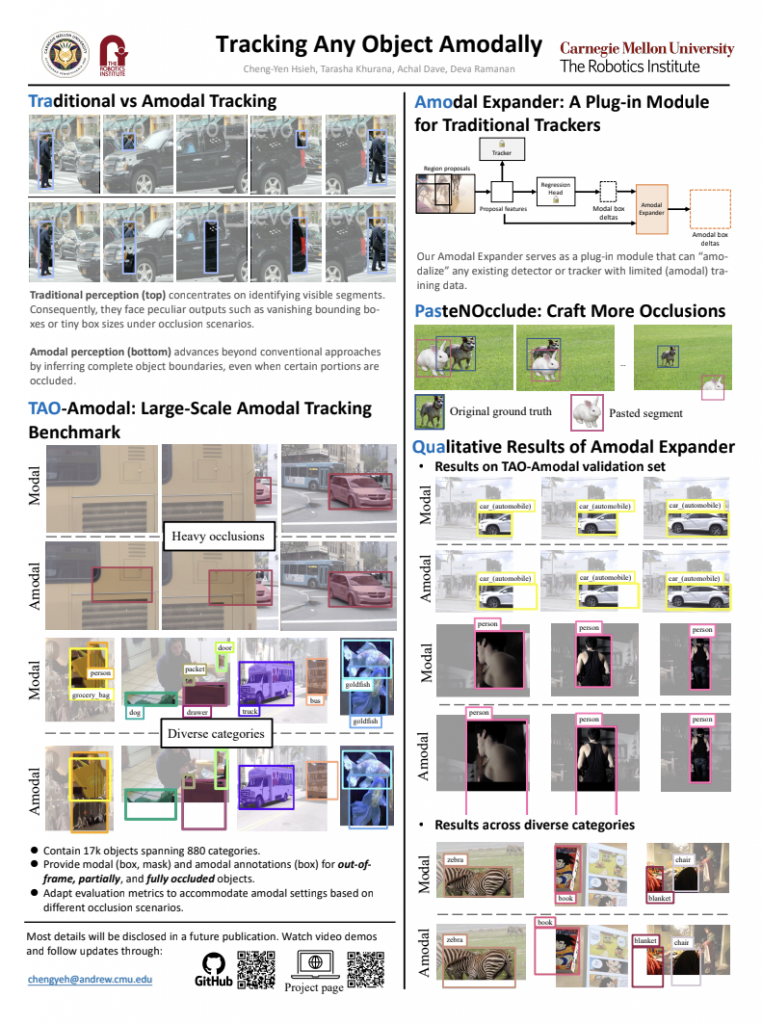

- We developed a lightweight plug-in module, the amodal expander, to transform standard, modal trackers into amodal ones through fine-tuning on a few hundred video sequences with data augmentation.

- We introduced PasteNOcclude, a data augmentation technicuqe for crafting occlusion scenarios, that benefits the model in perceiving occluded objects.

- Amodal Expander trained along with PasteNOcclude achieves a 3.3% and 1.6% improvement on the detection and tracking of occluded objects on TAO-Amodal. When evaluated on people, our method produces dramatic improvements of 2x compared to state-of-the-art modal baselines.

Summary

Summary – Spring 2023

In this semester, our progress can be categorized into three folds:

- Building the largest amodal object detection and tracking benchmark, TAO-Amodal dataset

- Extensive survey into amodal detection/tracking methods

- Proposed our amodal detection baseline