Experiment Setup

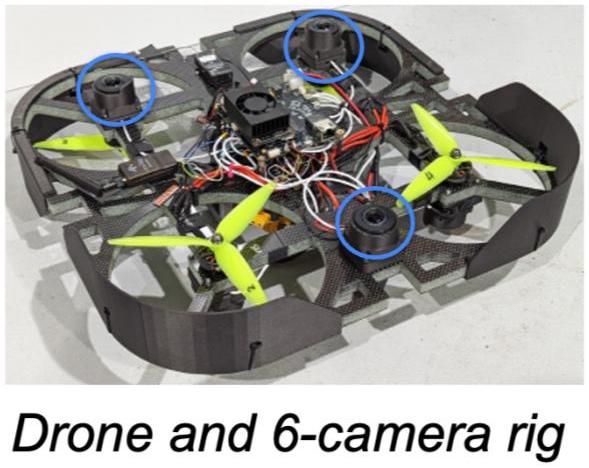

Our experiment setup involves a drone equipped with six fisheye cameras: three mounted on the top and three mounted on the bottom.

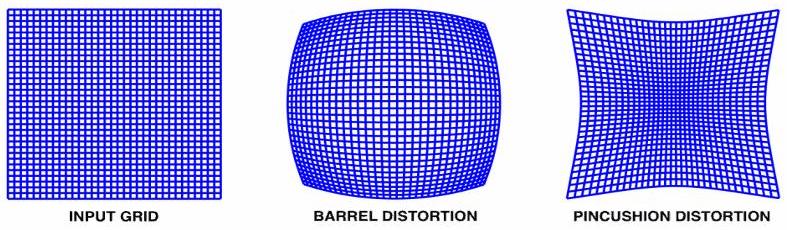

The fisheye lenses used in the experiment are known to have barrel (radial) distortion. They offer a wider field of view (FoV) than conventional lenses, so each image captures more of the scene. However, because of the distortion, useful information may be compressed toward the edges of the captured images.

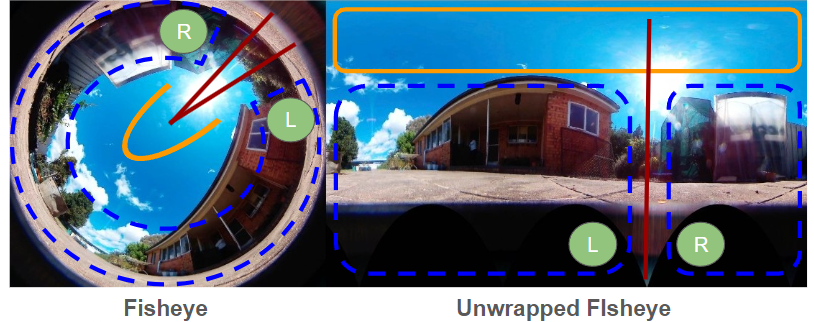

There are a few ways to deal with the high levels of distortion in fisheye space. One way is to transform the input fisheye image into an equirectangular or a cube-map space. The most common equirectangular projection is done by unwrapping the fisheye image along an axis (in red in the below image).

It can be observed that most of the center of the fisheye image maps to a small region of the unwrapped equirectangular image (marked by the yellow line). The cramped edge regions of the input fisheye image are mapped to most of the remainder of the image (marked by the dotted blue line).
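As a rough illustration of this unwrapping, the sketch below computes, for every pixel of an equirectangular output, which fisheye pixel it samples from. It is not the pipeline used in the experiment: it assumes an ideal equidistant lens model (image radius proportional to the angle from the optical axis), a square fisheye image with the principal point at its center, and a FoV of at most 180°. The function names `fisheye_to_equirect_maps` and `unwrap`, and the parameter `fov_deg`, are hypothetical.

```python
import numpy as np


def fisheye_to_equirect_maps(out_w, out_h, fish_size, fov_deg=180.0):
    """For each equirectangular pixel, return the fisheye (x, y) to sample.

    Assumptions (not from the original setup): equidistant lens model,
    square fisheye image, principal point at the image center, FoV <= 180.
    """
    half_fov = np.radians(fov_deg) / 2.0

    # Longitude (columns) and latitude (rows) at each output pixel center.
    lon = (np.arange(out_w) + 0.5) / out_w * 2.0 * half_fov - half_fov
    lat = half_fov - (np.arange(out_h) + 0.5) / out_h * 2.0 * half_fov
    lon, lat = np.meshgrid(lon, lat)

    # Unit viewing ray: +z is the optical axis, +x is right, +y is up.
    x = np.cos(lat) * np.sin(lon)
    y = np.sin(lat)
    z = np.cos(lat) * np.cos(lon)

    theta = np.arccos(np.clip(z, -1.0, 1.0))  # angle from the optical axis
    phi = np.arctan2(y, x)                    # direction around the axis

    # Equidistant model: radius grows linearly with theta and reaches
    # the edge of the image circle at theta == half_fov.
    r = (fish_size / 2.0) * theta / half_fov

    center = (fish_size - 1) / 2.0
    map_x = center + r * np.cos(phi)
    map_y = center - r * np.sin(phi)  # image rows grow downward
    return map_x, map_y


def unwrap(fisheye, out_w, out_h, fov_deg=180.0):
    """Nearest-neighbor resampling of a square fisheye image."""
    map_x, map_y = fisheye_to_equirect_maps(
        out_w, out_h, fisheye.shape[0], fov_deg
    )
    xi = np.clip(np.rint(map_x).astype(int), 0, fisheye.shape[1] - 1)
    yi = np.clip(np.rint(map_y).astype(int), 0, fisheye.shape[0] - 1)
    return fisheye[yi, xi]
```

For example, `unwrap(img, 512, 512)` on a square `H x H x 3` array returns a `512 x 512 x 3` array. Nearest-neighbor sampling keeps the sketch dependency-free; a real pipeline would more likely use a calibrated lens model and interpolated remapping.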

Data Collection

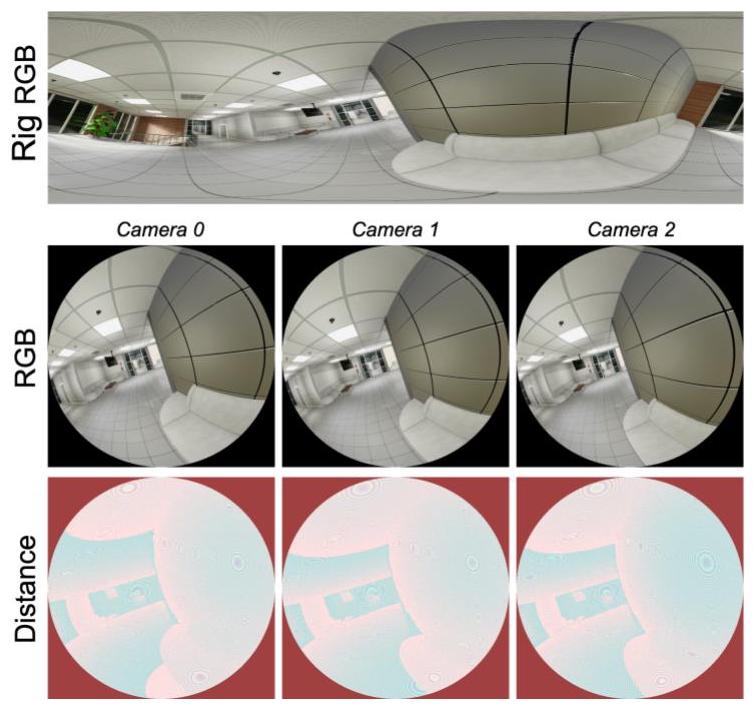

Dataset analysis: TartanAir dataset, collected in the AirSim simulator (running on Unreal Engine).

- 28 environments: ~45 videos; ~4500 valid frames

- Per-frame samples: 3 fisheye camera RGBs, distance GTs, and rig RGBs