BACKGROUND INFORMATION

What is localization/state estimation?

Localization (or state estimation) is the problem of estimating the location and orientation (i.e. the pose) of a moving agent relative to an arbitrary global reference frame.

What is the exact meaning of pose?

A pose can be represented as a vector p that combines a 3D position x with an orientation expressed as a quaternion q, giving seven values in total: p = [x, q].
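As a minimal sketch of this representation, the snippet below builds a 7-element pose vector from a position and a quaternion. The `Pose` class, the `make_pose` helper, and the (w, x, y, z) quaternion ordering are assumptions made for illustration; they are not taken from our codebase. The quaternion is normalized because only unit quaternions represent rotations.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose:
    """Hypothetical container for a pose p = [x, q]."""
    position: tuple     # 3D position (x, y, z)
    orientation: tuple  # unit quaternion, assumed ordering (w, x, y, z)

    def as_vector(self):
        # Concatenate position and orientation into the 7-element vector p.
        return list(self.position) + list(self.orientation)


def make_pose(position, quaternion):
    """Validate the inputs and normalize the quaternion to unit length."""
    if len(position) != 3 or len(quaternion) != 4:
        raise ValueError("expected a 3D position and a 4D quaternion")
    norm = math.sqrt(sum(c * c for c in quaternion))
    if norm == 0.0:
        raise ValueError("quaternion must be non-zero")
    unit_q = tuple(c / norm for c in quaternion)
    return Pose(tuple(float(c) for c in position), unit_q)


# Illustrative values only: a non-unit identity rotation is rescaled to unit length.
pose = make_pose([1.0, 2.0, 0.5], [2.0, 0.0, 0.0, 0.0])
p = pose.as_vector()
```

Here `p` holds the three position components followed by the four quaternion components.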

Why is it needed?

Accurate pose estimation is needed for a wide range of applications including navigation, virtual reality, and augmented reality.

DATASETS

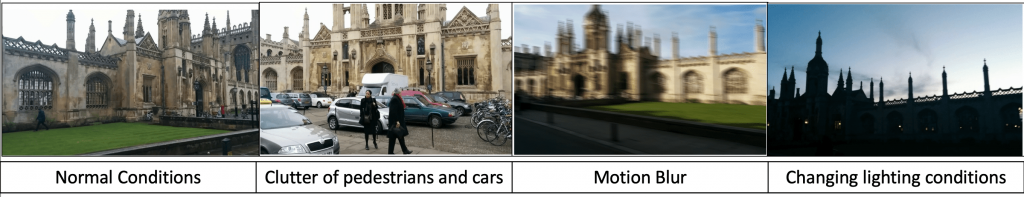

For the visual odometry baseline, we used the King’s College dataset, which is a subset of the Cambridge Landmarks dataset. The dataset pairs each RGB image with its ground-truth pose, i.e. a 3D position and a 4D orientation (quaternion). The images are diverse, with motion blur, clutter from vehicles and people, and varying lighting conditions.
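To make the image-to-pose pairing concrete, here is a small sketch that parses one annotation record into an image path, a position, and an orientation. It assumes each record is a whitespace-separated line of the form `image_path X Y Z W P Q R`; that layout, the `parse_pose_line` helper, and the sample values are illustrative assumptions and should be checked against the actual dataset files.

```python
def parse_pose_line(line):
    """Split one annotation line into (image_path, position, orientation).

    Assumed layout: image_path X Y Z W P Q R, whitespace-separated.
    """
    fields = line.split()
    if len(fields) != 8:
        raise ValueError("expected an image path followed by 7 pose values")
    image_path = fields[0]
    values = [float(v) for v in fields[1:]]
    position = values[:3]     # 3D position
    orientation = values[3:]  # 4D quaternion
    return image_path, position, orientation


# Made-up record for illustration, not real dataset values.
sample = "seq1/frame00001.png 1.0 2.0 0.5 1.0 0.0 0.0 0.0"
image_path, position, orientation = parse_pose_line(sample)
```

Lines that do not match the assumed layout, such as header lines, raise a `ValueError` and would need to be skipped by the caller.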

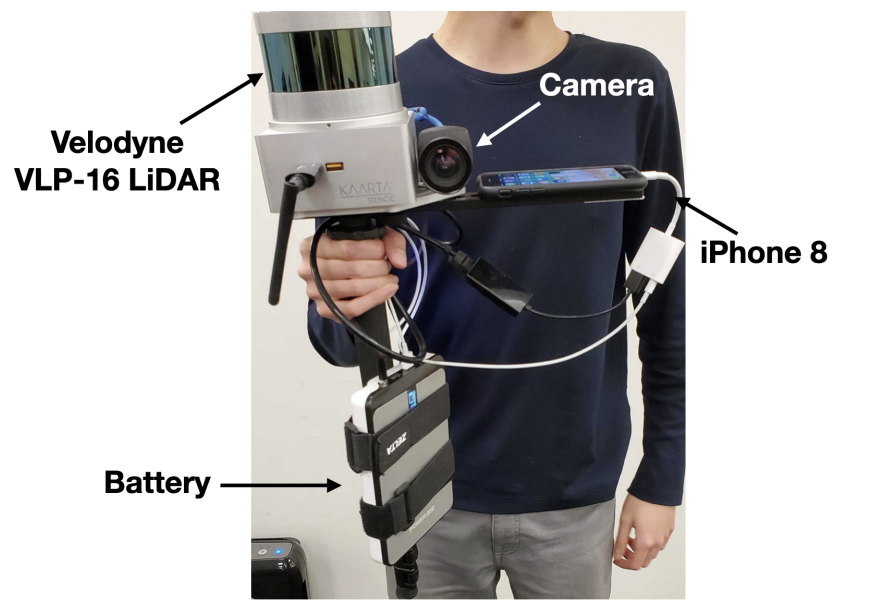

For the inertial odometry baseline, we used the dataset created by the authors of Inertial Deep Orientation-Estimation and Localization (IDOL). The dataset pairs IMU readings from the accelerometer, gyroscope, and magnetometer with the corresponding relative pose estimates.

SETUP FOR ARIA DATASET

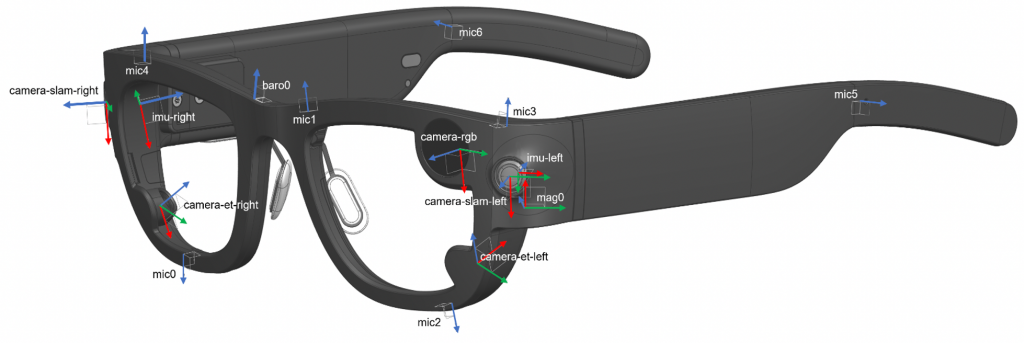

For our use case, we collect sample trajectories using the Aria glasses. The glasses have built-in IMU sensors and RGB cameras, so a single device provides both the inertial and the visual data we need.

To obtain ground-truth pose estimates for our visual-inertial odometry system, we use the Aria Research Kit. With this toolkit, Project Aria’s Academic Research Partners can request Machine Perception Services for their trajectory data and obtain 6-DoF pose estimates.

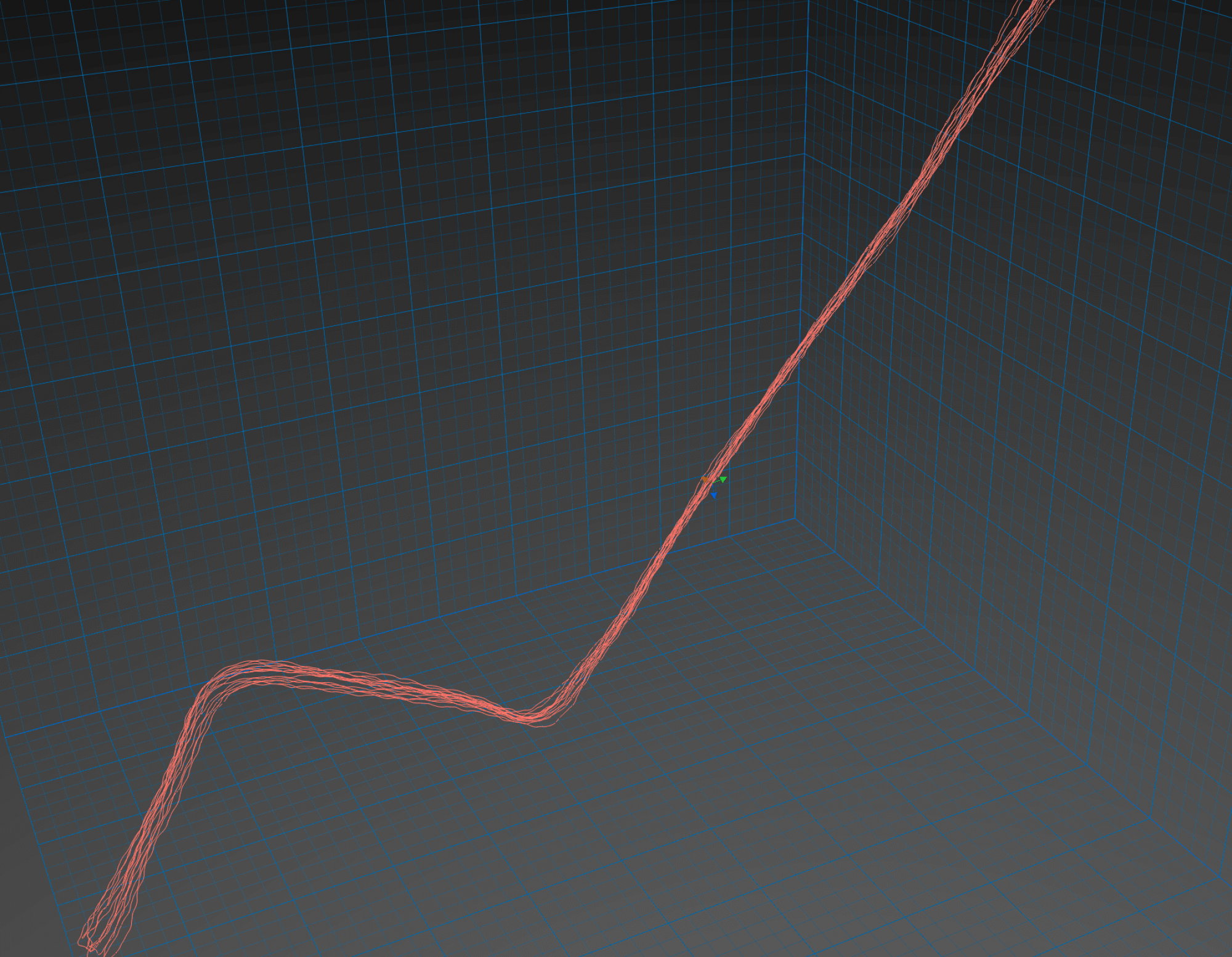

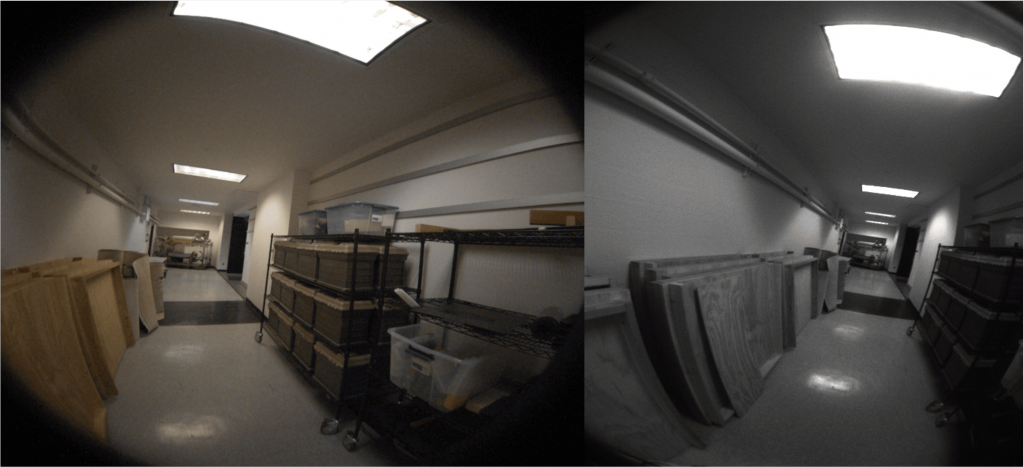

We use the toolkit developed by Meta to obtain the ground-truth pose estimates. The following image is a screenshot from the toolkit showing the trajectory recorded in Smith Hall.