Visual Odometry

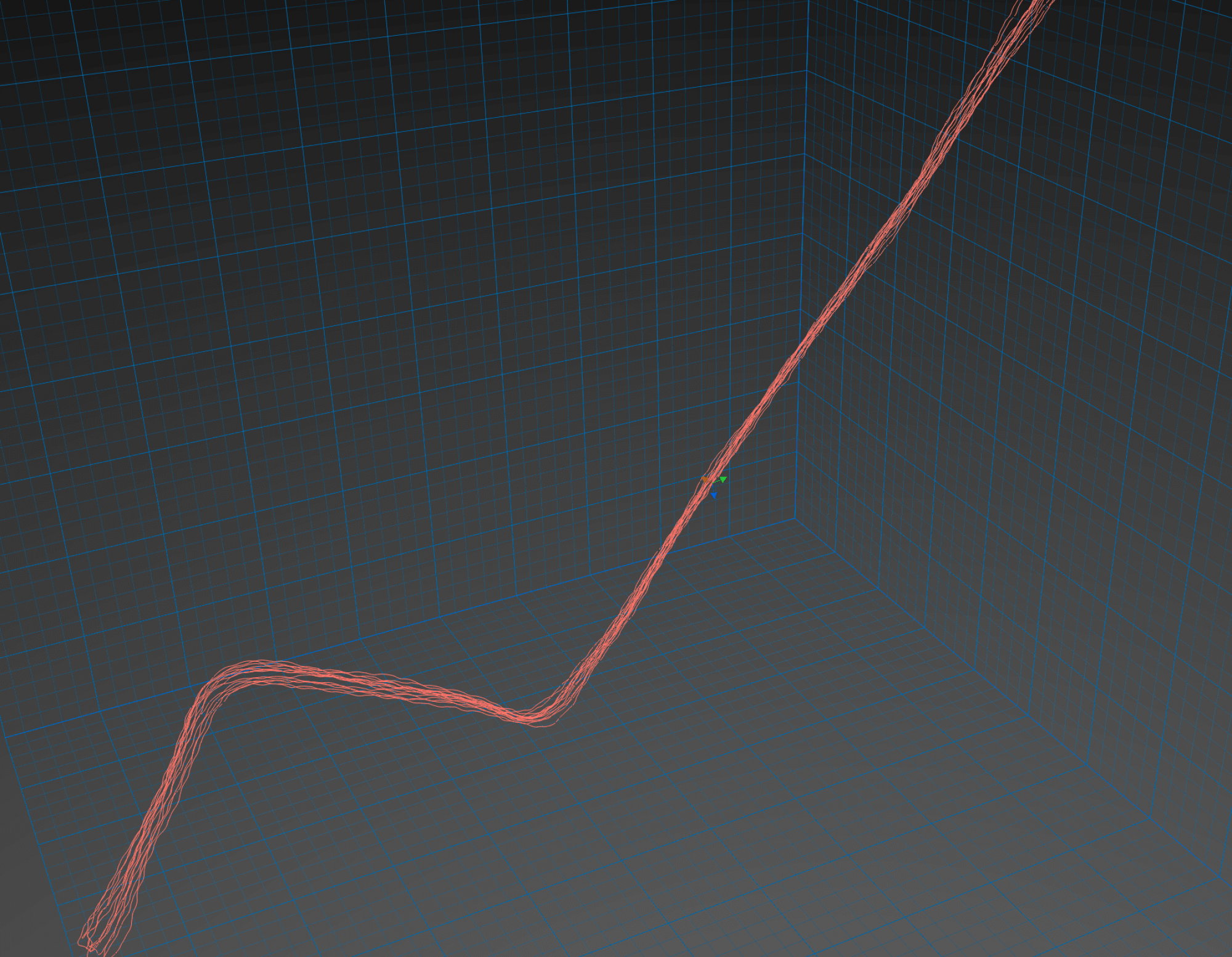

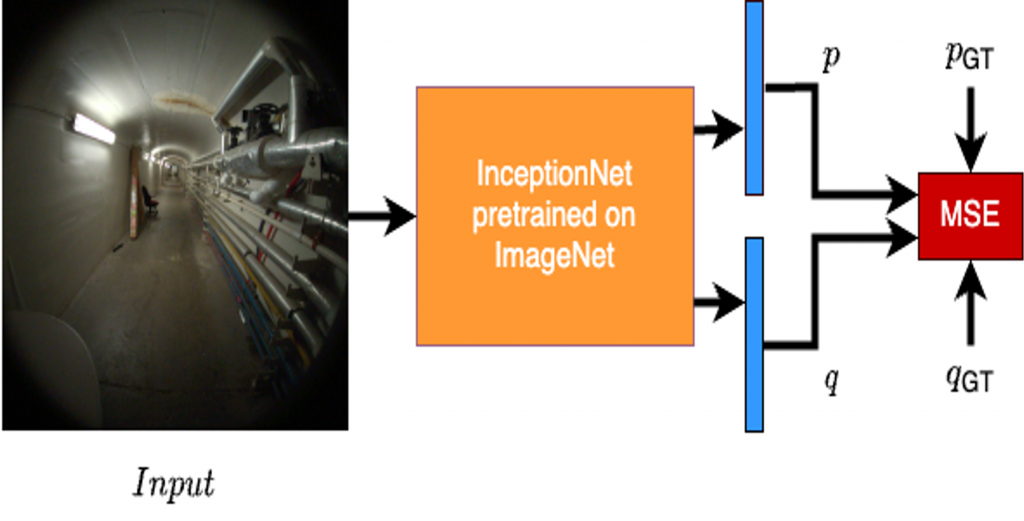

We use an InceptionNet backbone and modify its final fully-connected layers to regress a 7D pose: the position (x, y, z) and the rotation expressed as a quaternion.
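As a rough illustration of what regressing a 7D pose involves, the sketch below splits a 7-value output into position and a unit quaternion and scores it with a PoseNet-style loss (position distance plus a weighted quaternion distance). The names `split_pose` and `pose_loss`, the (qw, qx, qy, qz) ordering, and the default `beta` are assumptions made for this example; the loss actually used in our training is not specified here.

```python
import math


def split_pose(pose7):
    """Split a 7D pose into position (x, y, z) and a unit quaternion.

    Assumed layout (hypothetical): [x, y, z, qw, qx, qy, qz].
    """
    if len(pose7) != 7:
        raise ValueError("expected 7 values: x, y, z, qw, qx, qy, qz")
    position = tuple(pose7[:3])
    quat = pose7[3:]
    norm = math.sqrt(sum(c * c for c in quat))
    if norm == 0.0:
        raise ValueError("quaternion has zero norm")
    # A raw network output is not unit length, so normalize it.
    unit_quat = tuple(c / norm for c in quat)
    return position, unit_quat


def pose_loss(pred7, target7, beta=1.0):
    """PoseNet-style loss: position error + beta * quaternion error.

    beta trades off translation against rotation; its value here is
    a placeholder. The q / -q sign ambiguity is not handled.
    """
    p_pred, q_pred = split_pose(pred7)
    p_true, q_true = split_pose(target7)
    pos_err = math.dist(p_pred, p_true)
    rot_err = math.dist(q_pred, q_true)
    return pos_err + beta * rot_err


if __name__ == "__main__":
    prediction = [1.0, 0.0, 0.0, 2.0, 0.0, 0.0, 0.0]  # unnormalized quaternion
    target = [0.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0]
    print(pose_loss(prediction, target))
```

Normalizing the predicted quaternion before comparing it keeps the rotation term meaningful even when the network output drifts away from unit length.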

We train this model on a dataset collected in the basement of Smith Hall using Aria glasses. To establish a baseline, we also evaluate the model on the King’s College dataset so that we can compare it against the PoseNet implementation.

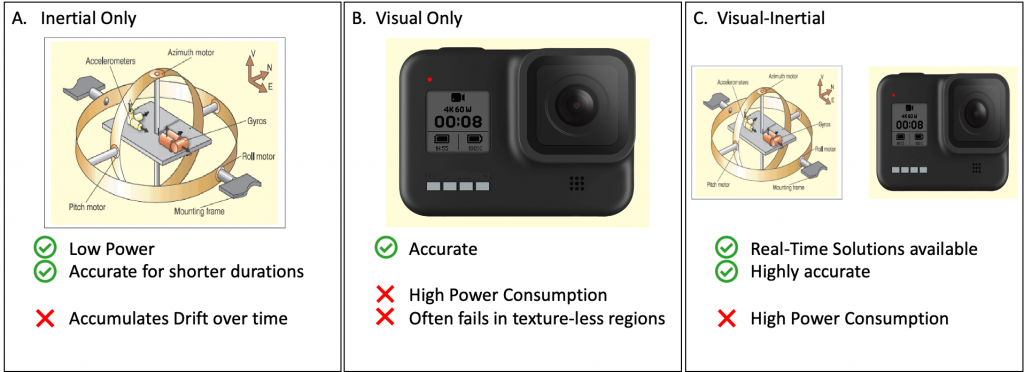

Using the RGB camera yields accurate pose predictions. The only downside is higher power consumption, because the camera must supply frames continuously.

Inertial Odometry

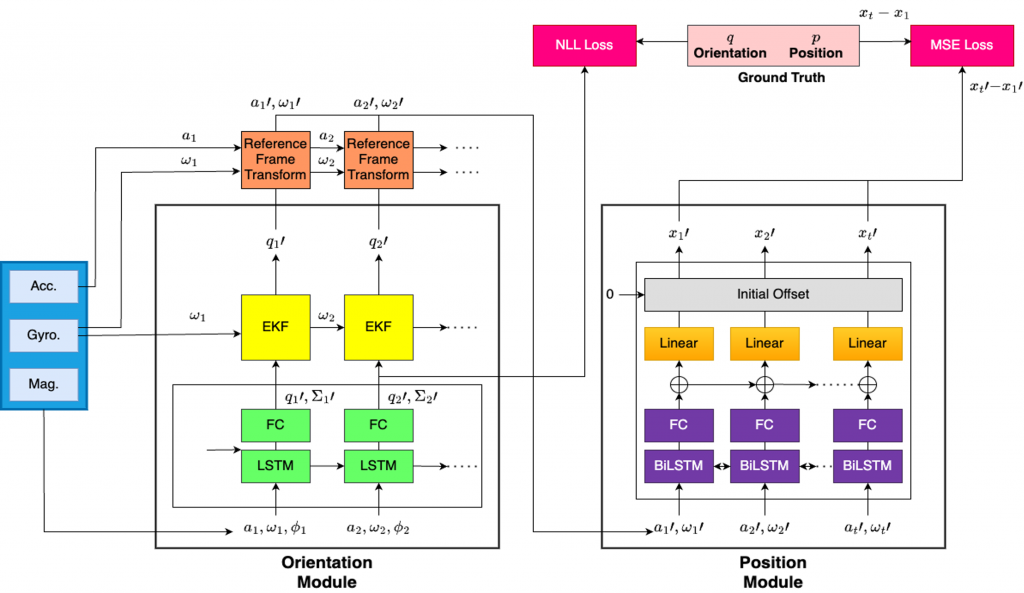

We use the IDOL (Inertial Deep Orientation-Estimation and Localization) architecture as our baseline. We trained this model on the authors’ dataset and on our own dataset collected using Aria glasses.

This model estimates the pose from gyroscope, magnetometer, and accelerometer readings.

These sensors consume very little power, but estimates derived from them accumulate drift quickly. Hence, they are accurate only over short durations.
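To see why inertial estimates drift, consider the simplest case of dead reckoning: integrating accelerometer readings twice to get position. The sketch below is not the IDOL network; it only illustrates that a small constant bias (the 0.05 m/s² value and 100 Hz rate are made-up numbers for this example) produces a position error that grows roughly quadratically with time.

```python
def integrate_position(accels, dt):
    """Double-integrate 1D acceleration samples into positions (Euler steps)."""
    velocity = 0.0
    position = 0.0
    positions = []
    for a in accels:
        velocity += a * dt          # first integration: acceleration -> velocity
        position += velocity * dt   # second integration: velocity -> position
        positions.append(position)
    return positions


if __name__ == "__main__":
    dt = 0.01      # assumed 100 Hz sample rate
    bias = 0.05    # assumed constant accelerometer bias in m/s^2
    # The device is actually stationary, so every sample is pure bias.
    drift = integrate_position([bias] * 1000, dt)
    print(f"drift after 1 s:  {drift[99]:.4f} m")
    print(f"drift after 10 s: {drift[999]:.4f} m")
```

A tenfold increase in elapsed time gives roughly a hundredfold increase in position error, which is why an uncorrected inertial estimate is only trustworthy for a short window.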

Combined low-power Visual-Inertial Odometry

To balance the strengths and weaknesses of the visual and inertial odometry systems, we combine the two models. The inertial odometry model runs continuously, and at every k-th timestep we use the visual odometry prediction to reset the inertial estimate, discarding the drift it has accumulated up to that timestep.
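The reset scheme can be sketched as a simple loop. This is a minimal illustration under stated assumptions, not our actual pipeline: it tracks position only, assumes the inertial model emits a per-step displacement, and assumes the visual model returns an absolute position on request. The names `fuse_trajectory` and `visual_pose_at` are hypothetical.

```python
def fuse_trajectory(start, inertial_deltas, visual_pose_at, k):
    """Propagate with inertial displacements; reset from vision every k steps.

    start           -- initial position, e.g. (x, y, z)
    inertial_deltas -- per-step displacement estimates from the inertial model
    visual_pose_at  -- callable(step) -> absolute position from the visual model
    k               -- number of steps between camera-based resets
    Returns the fused trajectory and the number of camera frames used.
    """
    if k < 1:
        raise ValueError("k must be a positive integer")
    estimate = list(start)
    trajectory = []
    camera_frames = 0
    for step, delta in enumerate(inertial_deltas, start=1):
        # Low-power path: dead-reckon from the inertial displacement.
        estimate = [e + d for e, d in zip(estimate, delta)]
        if step % k == 0:
            # High-power path: overwrite the drifted estimate with vision.
            estimate = list(visual_pose_at(step))
            camera_frames += 1
        trajectory.append(tuple(estimate))
    return trajectory, camera_frames


if __name__ == "__main__":
    # Synthetic example: true motion is 1.0 per step along x, the inertial
    # model overestimates by 10%, and the visual model is exact.
    deltas = [(1.1, 0.0, 0.0)] * 10
    visual = lambda step: (float(step), 0.0, 0.0)
    path, frames = fuse_trajectory((0.0, 0.0, 0.0), deltas, visual, k=5)
    print(path[3], path[4], frames)
```

In this toy run the estimate drifts for four steps and snaps back to the visual position on the fifth, using only two camera frames over ten steps. Resetting orientation would follow the same pattern.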

We search for the value of k that best balances power consumption, i.e. how frequently RGB frames are used, against the accuracy of the overall predictions.
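One way to explore this trade-off is to sweep over candidate k values and record, for each, the fraction of timesteps that need a camera frame and the resulting mean position error. The sketch below does this in 1D on synthetic data; the function name `sweep_k` and all numbers are illustrative assumptions, not measurements from our experiments.

```python
def sweep_k(true_positions, inertial_deltas, visual_positions, k_values):
    """Return rows of (k, camera duty cycle, mean absolute position error)."""
    n = len(true_positions)
    results = []
    for k in k_values:
        estimate = 0.0
        total_error = 0.0
        frames = 0
        for step in range(1, n + 1):
            estimate += inertial_deltas[step - 1]       # inertial update
            if step % k == 0:
                estimate = visual_positions[step - 1]   # visual reset
                frames += 1
            total_error += abs(estimate - true_positions[step - 1])
        results.append((k, frames / n, total_error / n))
    return results


if __name__ == "__main__":
    n = 20
    truth = [float(i) for i in range(1, n + 1)]  # synthetic ground truth
    deltas = [1.1] * n                           # inertial steps with 10% bias
    visual = truth                               # idealized, error-free vision
    for k, duty, err in sweep_k(truth, deltas, visual, [1, 5, 10]):
        print(f"k={k:2d}  camera duty cycle={duty:.2f}  mean error={err:.3f}")
```

Larger k lowers the camera duty cycle (a proxy for power) but raises the mean error, so the chosen k depends on how much error the application can tolerate.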

Overview of the above methods