Abstract

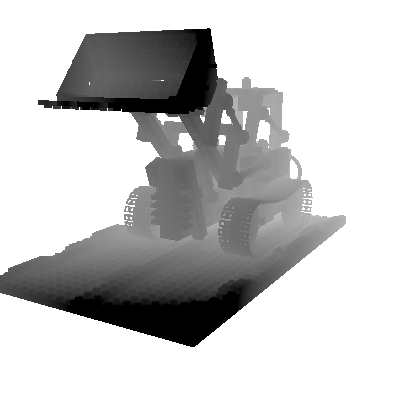

The defocus effect present in images captured by finite-aperture cameras can serve as a depth cue for objects outside the depth of field. In this project, we investigate the potential of the defocus effect as a depth cue and propose an inverse rendering framework for depth estimation. Our focus is on microscopic scenes, where the depth of field is narrow and the defocus effect is prominent. We demonstrate that our method can recover a reasonable 3D representation of the scene, enabling recovery of the depth map, synthetic refocusing, and generation of an all-in-focus image. Our framework does not require specialized hardware or rely on priors learned from data, and it is compatible with techniques such as multi-view stereo and photometric stereo.

Motivation

Recovering the shape of a scene or object has long been an interesting problem, especially in the monocular setting. Using a focal stack to acquire this information is particularly appealing in settings such as microscopy and photography. Most current approaches rely on priors learned from large datasets. We instead focus on a single scene, formulate the task as an inverse rendering problem, and learn a 3D representation of the scene or object. This allows us not only to recover depth information but also to re-render the scene or object with novel visual effects.

Problem Statement

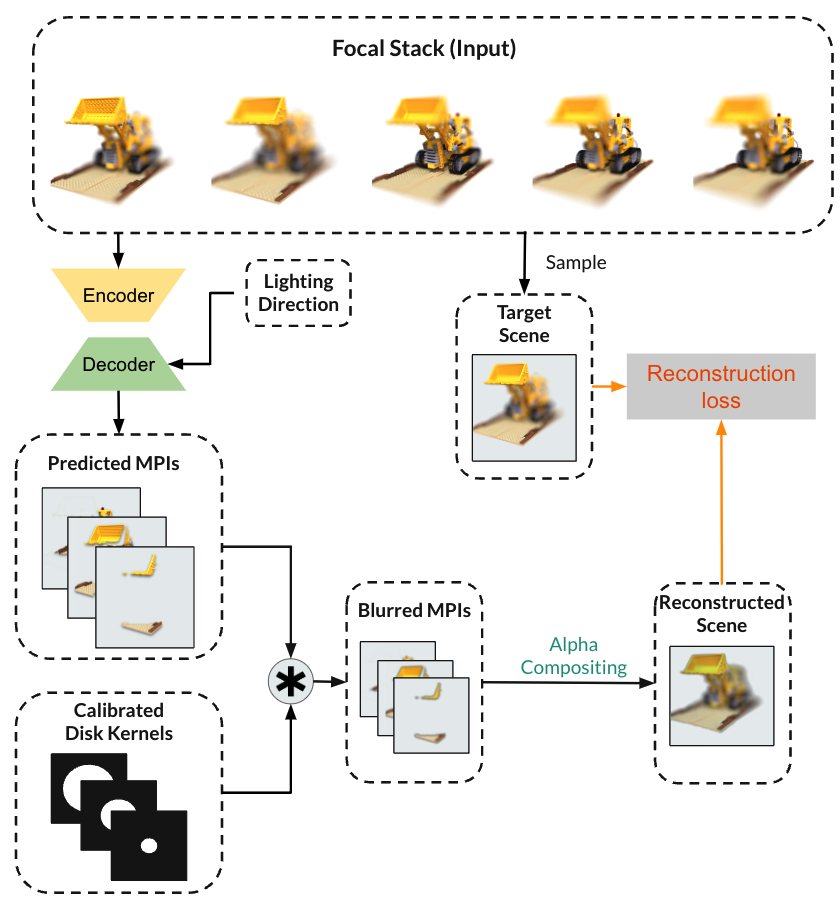

Given a focal stack (a set of images of the same scene captured at different focus settings), we want to learn a multiplane image (MPI) representation of the scene or object and recover the depth map from it.
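To make the depth-recovery step concrete, here is a minimal NumPy sketch of one common way to read a depth map out of an MPI. It is an illustration under stated assumptions, not necessarily the procedure used in this project: the planes are assumed to be ordered back to front, each plane carries a per-pixel alpha, and depth is taken as the expected plane depth under standard "over" compositing weights. The names `compositing_weights` and `mpi_depth_map` are hypothetical.

```python
import numpy as np

def compositing_weights(alpha):
    """Per-plane visibility weights for 'over' compositing.

    alpha: array of shape (D, H, W), planes ordered back (index 0)
    to front (index D-1). This ordering is an assumption of the sketch.
    """
    one_minus = 1.0 - alpha
    # trans[i] = product of (1 - alpha[j]) over all planes j in front of i.
    trans = np.ones_like(alpha)
    trans[:-1] = np.cumprod(one_minus[::-1], axis=0)[::-1][1:]
    return alpha * trans

def mpi_depth_map(alpha, depths, eps=1e-8):
    """Expected depth per pixel: weighted mean of the plane depths."""
    w = compositing_weights(alpha)
    depths = np.asarray(depths, dtype=float)[:, None, None]
    return (w * depths).sum(axis=0) / np.maximum(w.sum(axis=0), eps)

# Toy example: 3 planes, a 1x2 image.
alpha = np.zeros((3, 1, 2))
alpha[2, 0, 0] = 1.0   # pixel 0 is covered by the front plane
alpha[0, 0, 1] = 1.0   # pixel 1 only sees the back plane
depth = mpi_depth_map(alpha, depths=[3.0, 2.0, 1.0])
```

In the toy example, each pixel takes the depth of the single opaque plane it sees. With soft alphas the result is a blend of plane depths, which is one reason a per-pixel argmax over the weights is sometimes used instead.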

Approach

Our approach optimizes a neural network that predicts a multiplane image (MPI) representation for a specific scene. The scene is reconstructed by applying a disk kernel to the corresponding MPI layers. We supervise learning by minimizing the reconstruction loss between the reconstructed scene and the known target scene. The approach, its limitations, and potential improvements are discussed in more detail in Approaches and Results.
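The forward model described above can be sketched in NumPy as follows. This is a simplified illustration, not the project's implementation: the MPI is passed in as plain arrays rather than predicted by a network, images are grayscale, the disk radius is assumed to grow linearly with the distance between a plane and the focus depth (`blur_scale` is a hypothetical parameter), and the reconstruction loss is assumed to be a mean squared error. All function names are illustrative.

```python
import numpy as np

def disk_kernel(radius):
    """Normalized disk kernel; radius 0 gives the identity kernel."""
    r = int(np.ceil(radius))
    y, x = np.mgrid[-r:r + 1, -r:r + 1]
    k = (x ** 2 + y ** 2 <= radius ** 2).astype(float)
    return k / k.sum()

def blur(img, kernel):
    """Same-size 2D filtering of an (H, W) image with edge padding."""
    r = kernel.shape[0] // 2
    padded = np.pad(img, r, mode="edge")
    h, w = img.shape
    out = np.zeros((h, w))
    for dy in range(kernel.shape[0]):
        for dx in range(kernel.shape[1]):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out

def render_focal_slice(color, alpha, depths, focus_depth, blur_scale=1.0):
    """Blur each plane by its defocus, then composite back to front.

    color, alpha: (D, H, W), planes ordered back (0) to front (D-1).
    Assumption: disk radius = blur_scale * |plane depth - focus depth|.
    """
    out = np.zeros(color.shape[1:])
    for c, a, d in zip(color, alpha, depths):
        k = disk_kernel(blur_scale * abs(d - focus_depth))
        a_blur = blur(a, k)
        ca_blur = blur(c * a, k)  # blur premultiplied color
        out = ca_blur + (1.0 - a_blur) * out
    return out

def focal_stack_loss(color, alpha, depths, focus_depths, targets, blur_scale=1.0):
    """Mean squared error between rendered and target focal slices."""
    errs = [
        np.mean((render_focal_slice(color, alpha, depths, f, blur_scale) - t) ** 2)
        for f, t in zip(focus_depths, targets)
    ]
    return float(np.mean(errs))
```

In an actual optimization loop, the same operations would be written in an automatic differentiation framework so that gradients of the loss can flow back to the network that predicts `color` and `alpha`.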