Image Restoration

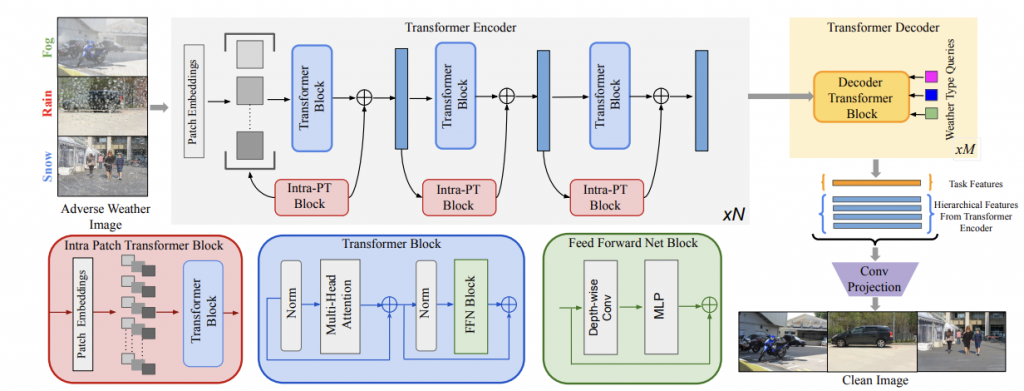

Our image restoration pipeline takes a foggy image as input and produces a clear image as output. For this, we use a transformer-based model, TransWeather [1].

TransWeather is built on a transformer architecture: it takes in any weather-degraded image and outputs a weather-free image of the same scene. In addition to basic transformer blocks, intra-patch transformer blocks propagate patch-level features through the network. In theory, the model can handle all weather types with a single network, improving on previous works that need a separate encoder block for each weather type. This is achieved by increasing the number of parameters learned in the model. Furthermore, weather type queries learned in the transformer decoder attend to the weather type present in the image.
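To make the idea of learned queries concrete, the following is a minimal NumPy sketch of single-head cross-attention, in which a small set of query vectors attends over encoder tokens. It is an illustration only, not the TransWeather implementation: the function name `query_cross_attention`, the sizes (4 queries, 64 tokens, dimension 16), and the omission of learned projection matrices are all assumptions made for brevity, and the queries are random here rather than trained.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def query_cross_attention(queries, features):
    """Single-head cross-attention without learned projections (assumption).

    queries:  (Q, D) array standing in for learned weather type queries.
    features: (N, D) array standing in for encoder patch tokens.
    Returns the attended output (Q, D) and the attention weights (Q, N).
    """
    d = queries.shape[-1]
    scores = queries @ features.T / np.sqrt(d)   # (Q, N) scaled dot products
    weights = softmax(scores, axis=-1)           # each row sums to 1
    return weights @ features, weights


# Hypothetical sizes; in a trained model the queries would be parameters.
rng = np.random.default_rng(0)
weather_queries = rng.normal(size=(4, 16))
encoder_tokens = rng.normal(size=(64, 16))
attended, weights = query_cross_attention(weather_queries, encoder_tokens)
```

Each row of `weights` shows how strongly one query attends to each encoder token; in a trained decoder these weights would be shaped by the degradation present in the image.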

Synthetic Image Generation

The image restoration network requires a similarity score between a ground-truth (clear) image and the restored image of the same scene to assess the quality of the restoration. Thus, we require synthetic foggy images, each paired with its clear original, to train the restoration model. We have a synthetic fog generation pipeline for both daytime and nighttime images, which we applied to the BDD100K [2] dataset.
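As an illustration of such a similarity score, the sketch below computes the peak signal-to-noise ratio (PSNR) between a clear image and a restored image. PSNR is a common choice for restoration tasks, but it is an assumption here; the function name `psnr` and the `[0, 1]` value range are also illustrative.

```python
import numpy as np


def psnr(reference, restored, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two same-shaped images.

    Pixel values are assumed to lie in [0, max_value].
    """
    reference = np.asarray(reference, dtype=np.float64)
    restored = np.asarray(restored, dtype=np.float64)
    if reference.shape != restored.shape:
        raise ValueError("images must have the same shape")
    mse = np.mean((reference - restored) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(max_value ** 2 / mse)


# Toy example: a uniform error of 0.1 gives an MSE of 0.01.
clear = np.zeros((4, 4, 3))
restored = np.full((4, 4, 3), 0.1)
score = psnr(clear, restored)
```

A higher score means the restored image is closer to the ground truth, which is why a paired clear image is needed for every foggy input.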

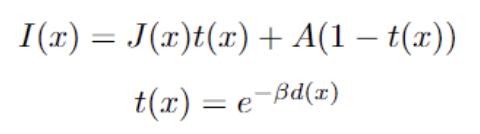

Synthetic fog generation follows the atmospheric scattering model [3]:

$$I(x) = J(x)\,t(x) + A\,\bigl(1 - t(x)\bigr), \qquad t(x) = e^{-\beta d(x)}$$

In the above equation, the foggy image I(x) is generated using the clear image J(x), transmission map t(x), atmospheric light A, scattering coefficient β, and depth map d(x). We apply varying values of β to the data.
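A minimal sketch of this fog synthesis step follows, using the standard form of the atmospheric scattering model, I = J·t + A·(1 − t) with t = exp(−β·d). The function name `add_fog`, the default value of `airlight`, and the assumption that a per-pixel depth map is already available are illustrative and not taken from our pipeline.

```python
import numpy as np


def add_fog(clear, depth, beta, airlight=0.8):
    """Apply synthetic fog to a clear image.

    clear:    (H, W, 3) image J with values in [0, 1].
    depth:    (H, W) depth map d (units are an assumption; beta scales with them).
    beta:     scattering coefficient; larger values give denser fog.
    airlight: scalar A (hypothetical default).
    """
    clear = np.asarray(clear, dtype=np.float64)
    depth = np.asarray(depth, dtype=np.float64)
    transmission = np.exp(-beta * depth)[..., None]   # t(x), broadcast over RGB
    foggy = clear * transmission + airlight * (1.0 - transmission)
    return np.clip(foggy, 0.0, 1.0)


# Toy example: a black image at unit depth, with beta chosen so that t = 0.5.
clear = np.zeros((2, 2, 3))
depth = np.ones((2, 2))
foggy = add_fog(clear, depth, beta=np.log(2.0))
```

With `beta=0` the transmission is 1 everywhere and the clear image is returned unchanged; as `beta` or the depth grows, each pixel approaches the airlight value.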

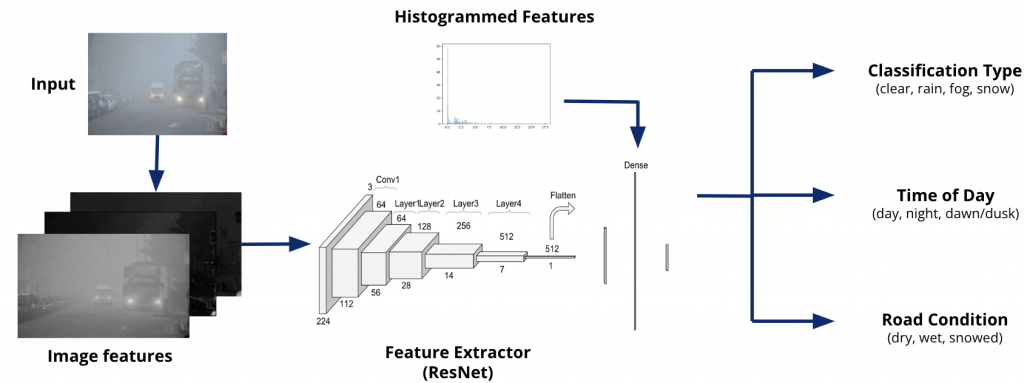

Weather Classification

The weather classification model is a multi-task classifier that determines not only the weather type, but also the time of day and the road condition. The model uses a ResNet50 backbone with engineered features corresponding to saturation, dark channel prior, local contrast, and histograms of oriented gradients (HoG). All of these features except HoG are computed directly and then passed to the backbone. The HoG feature is represented as a binned histogram and concatenated at the linear layers to form the predictions.
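Two of the engineered features can be sketched as below. This is an illustrative sketch rather than our implementation: the function names, the 3×3 patch size, and stacking the feature maps as extra input channels are assumptions, since the exact way the features are passed to the backbone is not specified above.

```python
import numpy as np


def dark_channel(image, patch=3):
    """Dark channel: per-pixel minimum over RGB, then a local minimum filter.

    image: (H, W, 3) array in [0, 1]; patch: odd window size (assumption).
    """
    min_rgb = image.min(axis=2)
    pad = patch // 2
    padded = np.pad(min_rgb, pad, mode="edge")
    h, w = min_rgb.shape
    out = np.full((h, w), np.inf)
    # Slide the window by taking the minimum over all shifted views.
    for dy in range(patch):
        for dx in range(patch):
            out = np.minimum(out, padded[dy:dy + h, dx:dx + w])
    return out


def saturation(image, eps=1e-8):
    """HSV-style saturation: (max - min) / max over the RGB channels."""
    mx = image.max(axis=2)
    mn = image.min(axis=2)
    return (mx - mn) / (mx + eps)


# Toy example: a white image with one pixel whose red channel is zero.
image = np.ones((5, 5, 3))
image[2, 2, 0] = 0.0
dark = dark_channel(image)
sat = saturation(image)

# Hypothetical input layout: RGB plus two feature maps as extra channels.
stacked = np.concatenate([image, dark[..., None], sat[..., None]], axis=2)
```

Fog tends to raise the dark channel and lower the saturation, which is why these maps are informative for weather classification.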

Resources

[1] Valanarasu, J. M. J., Yasarla, R., & Patel, V. M. (2022). TransWeather: Transformer-based Restoration of Images Degraded by Adverse Weather Conditions. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR).

[2] Yu, F., et al. (2020). BDD100K: A Large-scale Diverse Driving Video Database. arXiv preprint arXiv:1805.04687.

[3] Narasimhan, S. G., & Nayar, S. K. (2002). Vision and the Atmosphere. International Journal of Computer Vision.

[4] Godard, C., et al. (2019). Digging Into Self-Supervised Monocular Depth Estimation. In Proceedings of the IEEE International Conference on Computer Vision (ICCV).