Aggregated real-world data

The data used for weather classification must be aggregated by weather type. To our knowledge, there are currently no large-scale vehicle-collected datasets with weather-type annotations, so we are combining multiple datasets for both downstream tasks. To improve the generalizability of the models, we include data from both on-road and off-road bad-weather conditions. At the moment, the DAWN and Multi Weather Image (MWI) datasets are combined for weather classification, and we plan to keep adding more datasets.
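Combining datasets mainly means mapping each dataset's own label vocabulary onto one shared set of weather classes. The sketch below shows that step under stated assumptions: the label strings in `LABEL_MAP` and the unified class names are hypothetical placeholders, not the actual DAWN or MWI annotation names, and `merge_datasets` is an illustrative helper rather than part of any library.

```python
from dataclasses import dataclass

# Hypothetical mapping from each dataset's native labels to a shared taxonomy.
# The real DAWN / MWI label strings should be checked before use.
LABEL_MAP = {
    "DAWN": {"fog": "fog", "rain": "rain", "snow": "snow", "sand": "sandstorm"},
    "MWI": {"haze": "fog", "rainy": "rain", "snowy": "snow", "sunny": "clear"},
}


@dataclass
class Sample:
    path: str
    weather: str  # unified weather label
    source: str   # dataset the sample came from


def merge_datasets(datasets):
    """datasets: {name: [(image_path, native_label), ...]}.

    Returns Samples with unified labels; native labels without a mapping are skipped.
    """
    merged = []
    for name, items in datasets.items():
        mapping = LABEL_MAP[name]
        for path, label in items:
            unified = mapping.get(label.lower())
            if unified is not None:
                merged.append(Sample(path, unified, name))
    return merged
```

Keeping the source dataset on every sample makes it easy to check later whether a classifier is learning weather cues or dataset-specific artifacts.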

Synthetic data

To train the restoration model, we need clear, undegraded images paired with weather-degraded images of the same scene. We therefore take clear images from an on-road dataset and synthesize weather degradations on them. Because we also evaluate the restored images on downstream tasks, we need ground-truth annotations for those tasks as well.

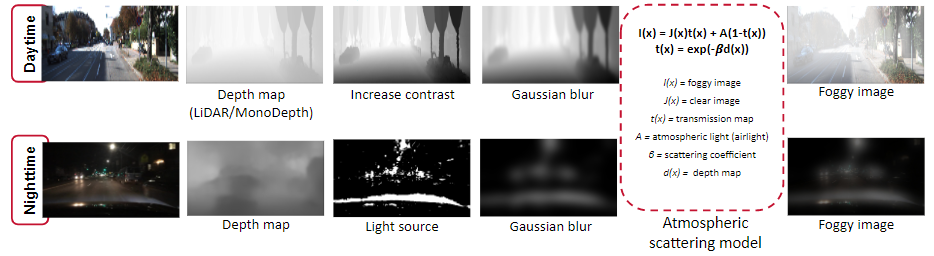

We use the BDD100K dataset to generate synthetic foggy images. The image below shows the overall pipeline for generating foggy images in day and night time.

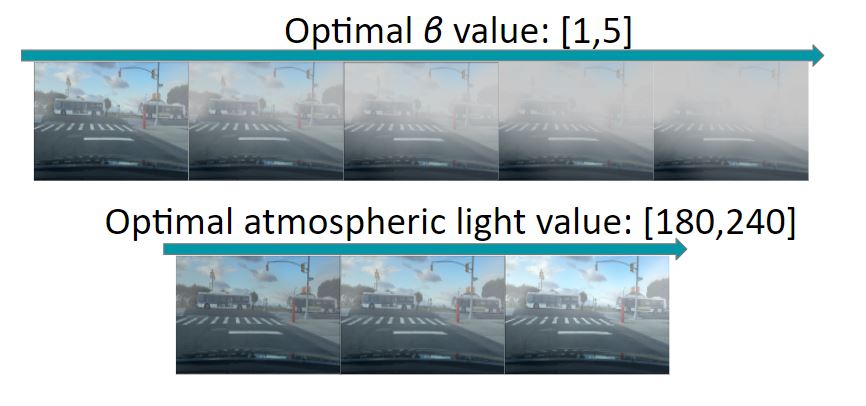

We first obtain a depth map for the image, using LiDAR ground-truth depth when it is available and otherwise a pre-trained monocular depth estimation network such as MonoDepth [3]. However, this depth map lacks the non-homogeneous character of real-world fog. To counter this, we increase the contrast of the depth map so that the change in fog density with depth is more pronounced. This in turn produces sharp edges around objects, which real fog does not have, so we smooth the depth map with a Gaussian blur. The processed depth map is then used in the atmospheric scattering model to render the foggy image. For the scattering coefficient β and the atmospheric light A, we visually compared results over a wide range of values and settled on a restricted range of 1 to 5 for β and 180 to 240 for A, which produced fog closest in appearance to real fog.
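The day-time steps can be sketched with the standard atmospheric scattering model, I(x) = J(x)·t(x) + A·(1 − t(x)) with transmission t(x) = exp(−β·d(x)), where J is the clear image and d the depth. This is a minimal NumPy sketch, not the project's code. Assumptions: depth is normalized to [0, 1] (the text does not state its units, but β in 1 to 5 only makes sense for a normalized depth), the contrast boost is a simple linear stretch about the mean, and `contrast` and `sigma` are hypothetical parameters.

```python
import numpy as np


def gaussian_kernel1d(sigma):
    radius = max(1, int(round(3 * sigma)))
    x = np.arange(-radius, radius + 1, dtype=np.float64)
    k = np.exp(-0.5 * (x / sigma) ** 2)
    return k / k.sum()


def gaussian_blur(img, sigma):
    """Separable Gaussian blur of a 2-D array (edge-padded, same output shape)."""
    k = gaussian_kernel1d(sigma)
    r = len(k) // 2
    padded = np.pad(img, r, mode="edge")
    rows = np.apply_along_axis(np.convolve, 1, padded, k, mode="valid")  # blur along x
    return np.apply_along_axis(np.convolve, 0, rows, k, mode="valid")    # blur along y


def synthesize_day_fog(clear, depth, beta=2.0, A=210.0, contrast=1.5, sigma=5.0):
    """clear: (H, W, 3) uint8 image; depth: (H, W), larger = farther.

    beta in [1, 5] and A in [180, 240] follow the ranges in the text;
    contrast and sigma are hypothetical tuning knobs.
    """
    d = (depth - depth.min()) / (depth.max() - depth.min() + 1e-8)  # normalize to [0, 1]
    d = np.clip(d.mean() + contrast * (d - d.mean()), 0.0, 1.0)     # boost depth contrast
    d = gaussian_blur(d, sigma)                                     # soften object edges
    t = np.exp(-beta * d)[..., None]                                # transmission map
    foggy = clear.astype(np.float64) * t + A * (1.0 - t)            # I = J t + A (1 - t)
    return np.clip(foggy, 0, 255).astype(np.uint8)
```

Sampling `beta` and `A` uniformly from the stated ranges per image is one simple way to get varied fog densities across the synthetic set.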

For night-time fog, the depth map is created exactly as for day-time fog and the same range of fog density is used. The difference lies in the atmospheric light value A. In real night-time fog, the fog is visible only around light sources, while the rest of the scene stays dark. To capture this, we use a non-homogeneous A instead of a single scalar value: segmentation produces a threshold map that marks the light-source regions of the image, pixels in those regions are assigned A in the optimal range, and all other pixels have A set to 255.
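The spatially varying A can be sketched as a per-pixel map plugged into the same scattering model. In this minimal sketch a simple brightness threshold stands in for the segmentation-based threshold map (an assumption, since the segmentation method is not specified), and `light_threshold` and `a_light` are hypothetical parameters. The 255 value for non-light pixels follows the text.

```python
import numpy as np


def night_atmospheric_light(image, light_threshold=220, a_light=210.0, a_other=255.0):
    """Per-pixel atmospheric light map A(x) for night-time fog.

    Light-source pixels get A from the day-time range (180 to 240);
    all other pixels get 255, as described in the text.
    A brightness threshold stands in for the segmentation-based threshold map.
    """
    brightness = image.astype(np.float64).max(axis=2)
    light_mask = brightness >= light_threshold
    return np.where(light_mask, a_light, a_other), light_mask


def apply_scattering(clear, transmission, A_map):
    """Atmospheric scattering model I = J t + A (1 - t) with a spatially varying A."""
    t = transmission[..., None]
    foggy = clear.astype(np.float64) * t + A_map[..., None] * (1.0 - t)
    return np.clip(foggy, 0, 255).astype(np.uint8)
```

The transmission map here would come from the same depth processing as the day-time pipeline (contrast boost, blur, t = exp(−β·d)).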

Weather Classification Model

Our weather classification model serves three purposes: (1) to separate images by weather type so they can be sent to distinct downstream restoration models, (2) to identify the time of day, and (3) to determine the road condition. Road condition is predicted independently of weather type: it encodes whether the road is slick regardless of whether rain or fog caused it, which better informs driving behavior.
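The three outputs can be pictured as independent heads on one shared feature vector, with the weather head deciding where the image is routed. This is a hypothetical sketch: the label sets, the linear heads, and the `restorers` dictionary are placeholders chosen for illustration, not the project's actual classes or architecture.

```python
import numpy as np

# Hypothetical label sets for the three outputs.
WEATHER = ["clear", "fog", "rain", "snow"]
TIME_OF_DAY = ["day", "night"]
ROAD = ["dry", "slick"]


def predict(features, heads):
    """Three independent linear heads on one shared feature vector.

    heads maps 'weather' / 'time' / 'road' to (D, n_classes) weight matrices.
    """
    return {
        "weather": WEATHER[int(np.argmax(features @ heads["weather"]))],
        "time": TIME_OF_DAY[int(np.argmax(features @ heads["time"]))],
        "road": ROAD[int(np.argmax(features @ heads["road"]))],
    }


def route(image, prediction, restorers):
    """Send the image to the restoration model for its predicted weather type.

    Images with no matching restorer (e.g. 'clear') pass through unchanged.
    """
    restorer = restorers.get(prediction["weather"])
    return image if restorer is None else restorer(image)
```

Because the road head does not read the weather prediction, a foggy frame and a rainy frame can both come out as "slick".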

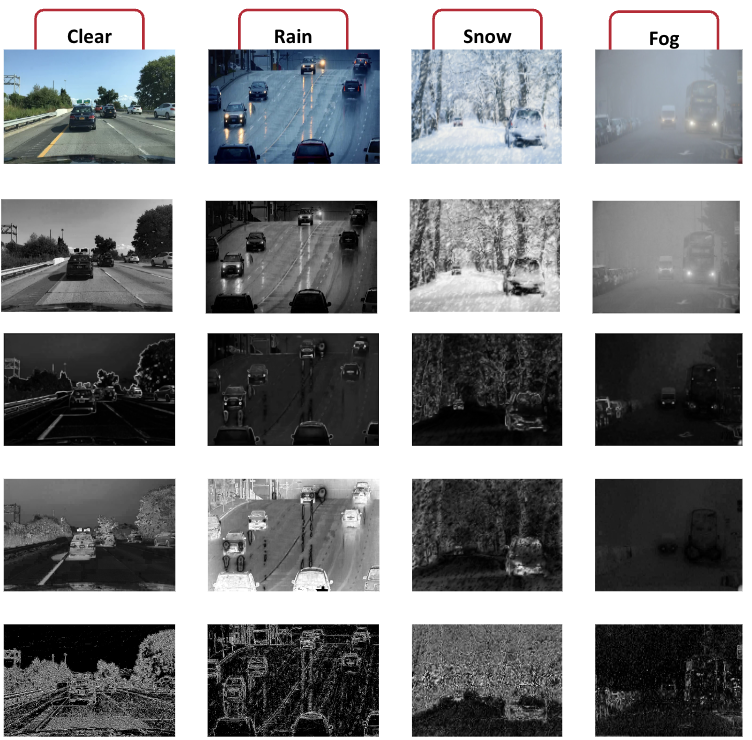

We first compute engineered features that capture physics-based properties of bad weather and then feed them into the backbone model. In our experiments, this worked better than feeding the raw image through the model and letting it learn these features itself.
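The document does not list which engineered features are used. As a hedged illustration only, the sketch below computes three common physics-motivated haze cues: the dark channel prior (He et al.), RMS contrast, and mean color saturation. The feature choice, `patch` size, and function names are assumptions.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def dark_channel(img01, patch=15):
    """Dark channel: per-pixel min over RGB, then min over a patch x patch window (patch odd)."""
    min_rgb = img01.min(axis=2)
    r = patch // 2
    padded = np.pad(min_rgb, r, mode="edge")
    windows = sliding_window_view(padded, (patch, patch))  # (H, W, patch, patch)
    return windows.min(axis=(-2, -1))


def weather_features(img_uint8, patch=15):
    """Hypothetical physics-motivated feature vector for one RGB image."""
    img = img_uint8.astype(np.float64) / 255.0
    gray = img.mean(axis=2)
    mx, mn = img.max(axis=2), img.min(axis=2)
    saturation = (mx - mn) / (mx + 1e-8)
    return np.array([
        dark_channel(img, patch).mean(),  # haze and fog raise the dark channel
        gray.std(),                       # RMS contrast drops in fog
        saturation.mean(),                # fog washes out color
    ])
```

Per-pixel maps such as the dark channel itself could also be stacked as extra input channels instead of being pooled to scalars.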

To classify road condition, we also had to segment the road before passing the image through the model. For this, we fine-tuned the Segment Anything model to detect road regions.
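Once a road mask is available, restricting the classifier's input to the road can be as simple as blanking non-road pixels and cropping to the road's bounding box. This sketch assumes a boolean mask produced by the fine-tuned segmentation model (not shown); the function name and the `fill` value are hypothetical.

```python
import numpy as np


def mask_to_road(image, road_mask, fill=0):
    """Keep only road pixels and crop to the road's bounding box.

    image: (H, W, C) array; road_mask: boolean (H, W) from a segmentation model.
    Returns None when no road is detected.
    """
    if not road_mask.any():
        return None
    masked = image.copy()
    masked[~road_mask] = fill  # blank everything that is not road
    rows = np.flatnonzero(road_mask.any(axis=1))
    cols = np.flatnonzero(road_mask.any(axis=0))
    return masked[rows[0]:rows[-1] + 1, cols[0]:cols[-1] + 1]
```

Cropping keeps the road region at a useful resolution after resizing, while blanking stops sky and buildings from leaking weather cues into the road-condition decision.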

Image Restoration Model

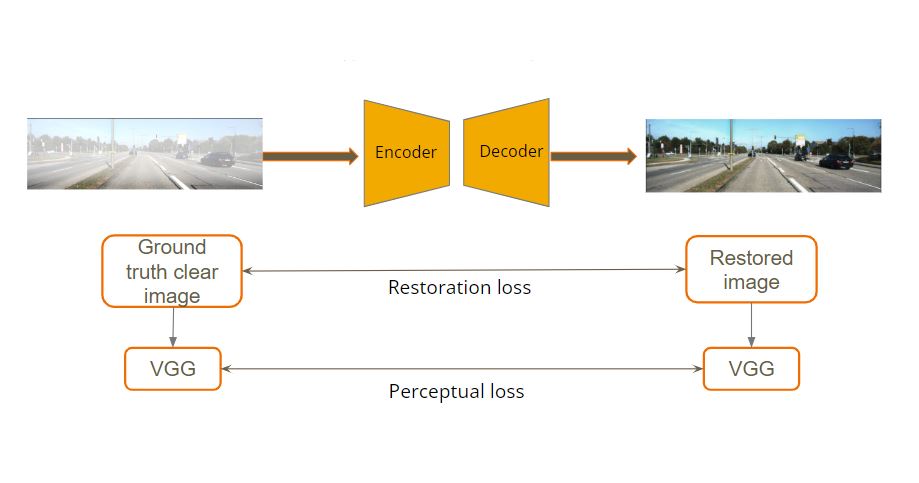

For image restoration, we use a single transformer encoder-decoder model based on TransWeather [2] to restore an image degraded by any type of weather. The TransWeather model has three parts:

- Transformer encoder: the degraded input image is passed through a transformer encoder that learns coarse and fine information across its stages.

- Transformer decoder: the decoder block uses the encoded features as keys and values and learnable weather-type query embeddings as queries.

- Convolutional projection block: the decoded features are passed through a convolutional projection block to produce the clean image.
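The decoder's key idea, learnable weather-type queries attending over encoder features, can be illustrated with single-head scaled dot-product cross-attention. This is a simplified sketch, not TransWeather's implementation: it omits multiple heads, normalization, and feed-forward layers, and the projection matrices `W_q`, `W_k`, `W_v` are hypothetical names.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def weather_query_attention(enc_tokens, queries, W_q, W_k, W_v):
    """Single-head cross-attention.

    enc_tokens: (N, C) flattened encoder features, used as keys and values.
    queries:    (Q, C) learnable weather-type query embeddings.
    Returns (Q, C) attended features and the (Q, N) attention weights.
    """
    q = queries @ W_q
    k = enc_tokens @ W_k
    v = enc_tokens @ W_v
    attn = softmax(q @ k.T / np.sqrt(k.shape[-1]), axis=-1)
    return attn @ v, attn
```

Because the queries are learned rather than computed from the image, each one can specialize in looking for a particular kind of degradation across all encoder tokens.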

The model is trained with the sum of an L1 restoration loss and a perceptual loss. The L1 loss pushes the restored image toward the clear ground-truth image, so the model learns to remove the weather degradation. The perceptual loss is the mean squared error between features extracted from the ground-truth clear image and features extracted from the restored output.
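The combined objective can be written down in a few lines. The perceptual term needs a feature extractor, normally a pretrained network; in this sketch a hypothetical finite-difference function stands in so the example runs without model weights, and the optional weight `lam` is an assumption (`lam=1.0` gives the plain sum described above).

```python
import numpy as np


def l1_loss(restored, clear):
    return float(np.mean(np.abs(restored - clear)))


def perceptual_loss(restored, clear, feature_fn):
    """MSE between features of the restored and the clear image."""
    return float(np.mean((feature_fn(restored) - feature_fn(clear)) ** 2))


def restoration_loss(restored, clear, feature_fn, lam=1.0):
    # lam = 1.0 gives the plain sum of the two terms
    return l1_loss(restored, clear) + lam * perceptual_loss(restored, clear, feature_fn)


def gradient_features(img):
    # Hypothetical stand-in for a pretrained feature extractor:
    # horizontal and vertical finite differences, cropped to a common shape.
    dx = np.diff(img, axis=1)[:-1]
    dy = np.diff(img, axis=0)[:, :-1]
    return np.stack([dx, dy])
```

A uniform brightness offset is penalized only by the L1 term, while blurred or missing structure is also penalized by the feature term, which is why the two are combined.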

Experiments with the Restoration Model

- We first reproduced the TransWeather model with the best available weights and tested it on a single weather type, fog. It gave good results on synthetic rain data but did not produce clear restored images on the real-world DAWN dataset, possibly due to domain shift. Fine-tuning on real-world data would be the natural next step, but it is not possible because paired clear and degraded images of the same scene are unavailable.

- Next, we generated synthetic weather-degraded data that more closely matches real-world data. We trained the TransWeather model from scratch on our synthetic fog images generated from BDD100K and tested it on real-world data: the DAWN dataset, dashcam videos collected by our team while driving, and foggy images from the BDD100K test set.

- We evaluated these results by comparing downstream task performance on foggy and restored images. For this, we ran a pre-trained object detector on the foggy images before and after restoration and compared its performance.
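The before/after comparison can be sketched with a simple detection metric. The text does not name the metric; this sketch assumes recall at an IoU threshold of 0.5 with greedy one-to-one matching against ground-truth boxes, whereas a full evaluation would more typically report mAP. Boxes are assumed to be `(x1, y1, x2, y2)` tuples, and the function names are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def recall_at_iou(gt_boxes, pred_boxes, thr=0.5):
    """Fraction of ground-truth boxes matched one-to-one by a prediction with IoU >= thr."""
    used, hits = set(), 0
    for g in gt_boxes:
        best_j, best_iou = None, thr
        for j, p in enumerate(pred_boxes):
            if j in used:
                continue
            v = iou(g, p)
            if v >= best_iou:
                best_j, best_iou = j, v
        if best_j is not None:
            used.add(best_j)
            hits += 1
    return hits / len(gt_boxes) if gt_boxes else 1.0
```

Usage: run the same detector on the foggy and the restored version of each image and compare `recall_at_iou(gt, preds_foggy)` with `recall_at_iou(gt, preds_restored)`; an increase suggests restoration helps the downstream task.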

References

[1] Z. Zhang et al. "Scene-free multi-class weather classification on single images." Neurocomputing.

[2] J. M. J. Valanarasu, R. Yasarla, and V. M. Patel. "TransWeather: Transformer-based restoration of images degraded by adverse weather conditions." CoRR, abs/2111.14813, 2021.

[3] C. Godard et al. "Digging into self-supervised monocular depth estimation." In Proceedings of the IEEE International Conference on Computer Vision (ICCV), 2019.