Limits of Neural Face Rendering

Sponsored by Facebook Reality Lab

Supervised by Dr. Jason Saragih, Prof. Yaser Sheikh and Prof. Ioannis Gkioulekas

Introduction

Photo-realistic human face rendering and reconstruction in essential to real-time telepresence technology that drives modern Virtual Reality applications. Since humans are social animals that have evolved to express and read emotions from subtle changes in facial expressions, tiny artifacts give rise to uncanny valley that could hurt the user experience. Nowadays, many modern 3D telepresence methods leverage deep learning models and neural rendering for high-fidelity reconstruction, and to tackle difficult problems such as novel view synthesis and view-dependent effects modeling. These approaches are usually data-hungry, and the design of a capture system and data collection pipeline would directly determine the performance of those models.

Hence, we are motivated to find optimal multi-veiw camera system for building photo-realistic codec avatar with least amount of data collected required. Optimal can be referred to number of cameras, placing angle of the camera, number of expression required…etc., but in our project we focus on number of cameras. We start with understand generalization ability of model on the given dataset. We present the ablation study of how different model architectures respond to synthesizing viewpoints and expressions. We use a conditional VAE model as our baseline, and evaluate model’s reconstruction quality with respect to the network architectures, which includes spatial bias, texture warp field and residual blocks. Empirically, we find out that baseline model is beneficial from these network architectures on interpolating novel viewpoint while we do not observe the same improvement of model’s performance on generalizing to unseen expression.

Training Pipeline

Our model greatly resembles the Deep Appearance Model, which is a VAE model that takes meshes and average texture as input and decodes the view-dependent textures for rendering. We regress the relative displacement for each vertex in the mesh on the normalized ground-truth data. The input texture is the average texture from each

camera of a certain frame. The decoder is given the camera position from which the face is being viewed, and tries to predict the texture that renders to the ground-truth screen image from that camera view. We use Nvdiffrast for differentiable rendering to propagate gradients from screen images to the predicted textures. The input textures are normalized by the average texture and standard deviation across all expressions and views of the whole dataset, and the model also generates predictions in the normalized texture space. When calculating loss, a mask is provided by the renderer because we only care about the parts that can be covered by the face texture, where the gradients flow. The facial texture weight mask is manually annotated that assigns different weights to different part of the face texture. This is because humans are more sensitive to certain regions such as eyes and mouth, and we want to make the model focus on these parts. The screen-space mask assigns higher weights to eyes and mouth regions when calculating the loss since these parts are more important in human communication. This mask can be acquired by rendering the texture weight mask to the frame being trained on. Formally, the loss function is given by L = λ1 ∥M(v, ˆT) ⊙(R(v, ˆT) −I)∥2 + λ2 ∥ˆG −G ∥2 + λ3KL(N(μz,σz)||N(0,I)), where M and R denotes the

screen masking and rendering functions respectively, v ∈R3 is a camera view vector, ˆT is the predicted texture by decoder, I is the ground-truth screen image, ˆG is the predicted geometry, G is the ground-truth geometry, μz and σz are the mean and variance of the latent distribution. For faster convergence and unequal learning rate, we multiply the output mean of encoder by 0.1 and the log standard deviation by 0.01. We use Adam optimizer and perform 200K iterations for all the experiments. We set λ1 = λ2 = 1 and

λ3 = 0.01.

Model

Our model follows a VAE design, where the encoder consists of 8 convolutional layers, each with kernel size 4 and stride 2, that downsamples the input texture from a resolution of 1024 to 2. For the mesh input, we simply encode it with a multi-layer perceptron (MLP). On the decoder side, the view information is fed into an MLP and the feature is concatenated to the latent code. Thus, the texture decoder can be conditioned on this view information and models view-dependent effects in texture. We explore several different architectures to investigate their generalizing capacity on novel expressions and camera views.

Color Correction: Since different cameras could have different color space, we optimize color correction parameters for each camera. Color correction is performed on the output texture by scaling and adding a bias to each RGB channel. The scaling factors and biases are initialized to 1 and 0, respectively. We fix the color correction parameters of one camera as an anchor and train the other parameters as a part of the model. Applying color correction is necessary, otherwise the reconstruction error will be dominated by the color difference instead of exact colors of the pixels.

Spatial-Bias: For convolutional layers in the decoder for upsampling, instead of adding the same bias value per channel in the feature map, we add a bias tensor that has the sam shape as the feature, meaning that each spatial location has its own bias value. In this way, the model is able to capture more position-specific details in the texture, such as wrinkles and lips.

Warp Field: We can also decode a warp field from the latent space and bilinearly sample the output texture with the warp field. Conceptually, texture generation can be decomposed into 2 steps: a synthesized texture on a deformationfree template followed by a deformation field that introduces shape variability. Denoting by T(p), the value of the synthesized texture at coordinate p = (x,y) and by W(p), the estimated deformation field, we consider that the observed image, I(p), can be reconstructed as follows: I(p) = T(W(p)), namely the image appearance at position p is obtained by looking up the synthesized appearance at position W(p). Technically, we obtain a warp field as Deformable Autoencoders does by integrating both vertically and horizontally on the generated warping grid to avoid flipping of relative pixel positions.

Residual Connection: We can insert residual layers into our network to make it deeper. We investigate whether this increase in model capacity would make it generalize better.

Experiment Setting

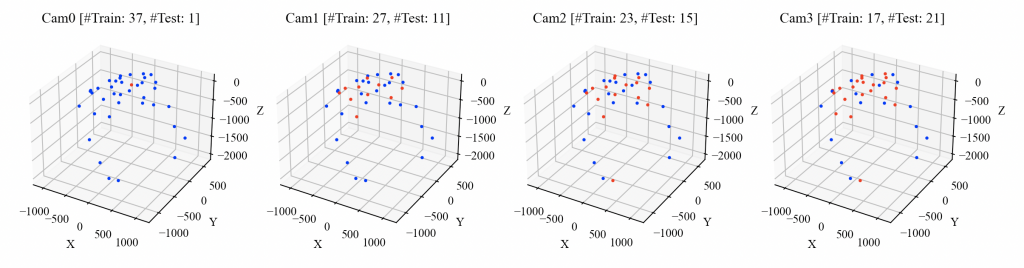

Camera Split We perform ablation study on the captured data on one identity to test different architectures on novel views and expressions. We manually split the testing and training camera sets from the total of 38 cameras such that the testing cameras are approximately equally spaced. There are 4 sets of camera split, cam0, cam1, cam2, and cam3, we used for the ablation stud, where cam0 has the greatest number of training cameras (37) and least number of testing cameras (1), and Cam3 has the least number of training cameras (17) and greatest number of testing cameras (21).

Evaluation

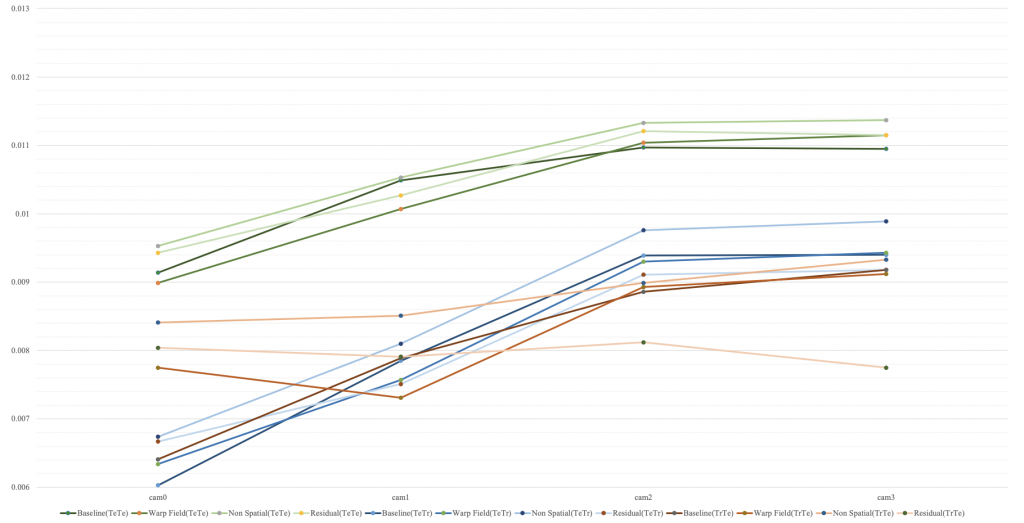

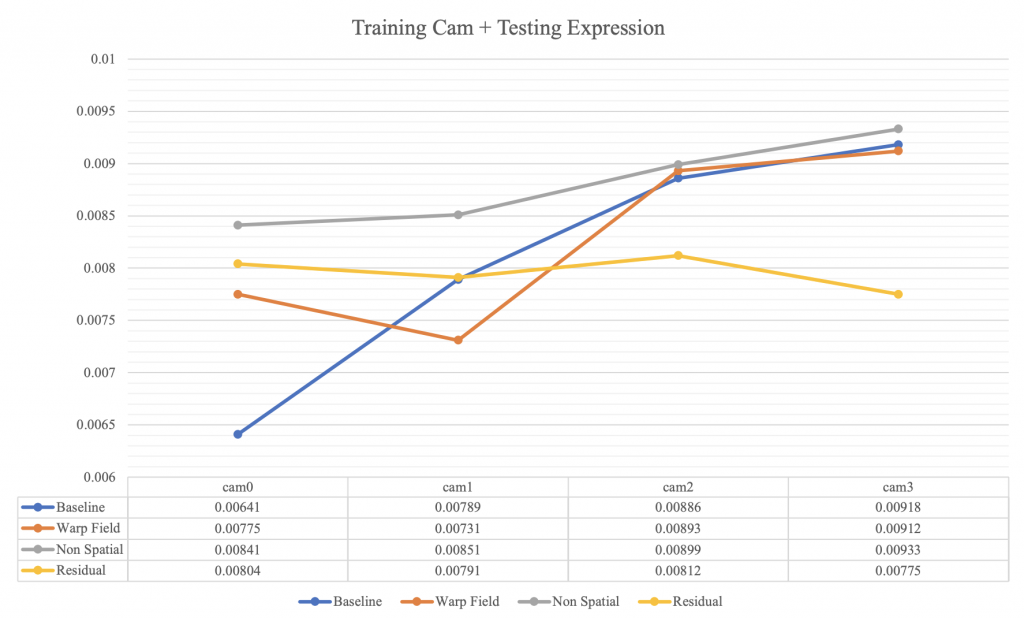

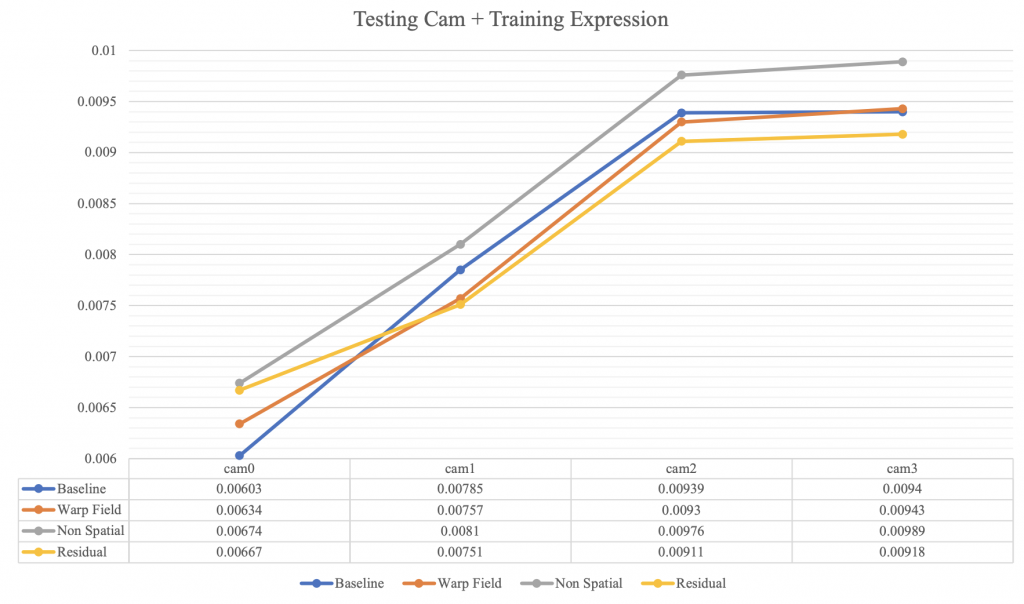

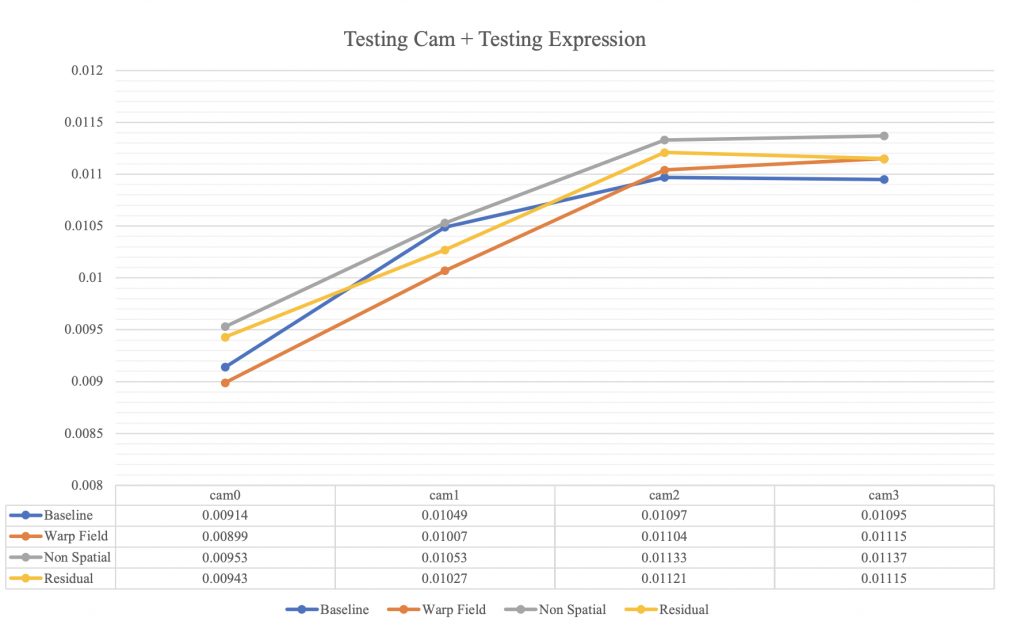

We conducted ablation study on 3 different model architectures: Spatial-Bias, Warp Field and Residual Block. To examine model’s generalization ability, we have 3 sets of evaluation, which will tell us model’s interpolation capacity on either novel viewpoint, or novel expression, or on both novel viewpoint and expression:

1. Testing Camera on Testing Expression (Generalization on Viewpoint + Expression)

2. Testing Camera on Training Expression (Generalization on Viewpoint)

3. Training Camera on Testing Expression (Generalization on Expression)

To achieve fair comparison, we trained color correction parameters for unseen testing camera for two epochs of validation data before evaluation. We also applied encoder fine-tuning on the testing expression as we would like to test the capacity of the conditional decoder. Hence, the encoder need to be able to generate the latent code that is specialized on the given dataset. Implementation-wise, we trained encoder on test data for 10 epochs on given cameras before evaluation.

Result

Training takes around one day for each model using a P3.16x instance with 8 Nvidia V100 GPUs on AWS. 2. matching between number of loss and the image reconstructed quality, so people can get an idea on how good or bad model can generate image based on the loss provided.