Over the past few years, Facebook Reality Labs has been working on a system called Codec Avatars, with the goal of photorealistic telepresence.

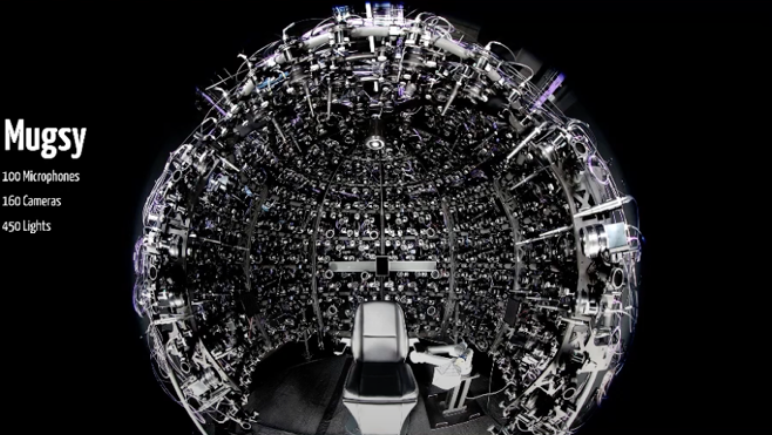

Briefly, the VR system learns to encode geometry and view-dependent appearance into a latent code, and to decode them back, using data captured by a multi-camera rig. The system is trained by minimizing the difference between rendered and real image pixels. Deep learning is famously data-hungry, so to feed the beast with enough data, the team built a multi-camera capture system called Mugsy.

Mugsy data can come from hundreds of cameras and can be captured at different times. The cameras can differ in properties such as lens type, focal length, aperture, and sensor temperature. The goal of this project is to develop a technique that calibrates the color response of all the cameras, at arbitrary points in time, with minimal manual effort.

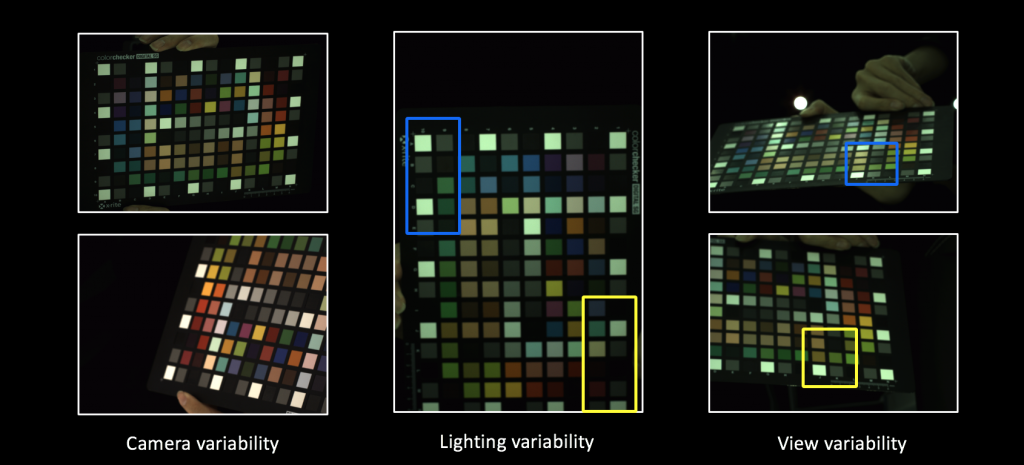

However, color correction for such a large system is non-trivial. The process is entangled with three sources of variability, which existing approaches may not handle correctly:

(1) Camera variability: different cameras may sense the same color differently;

(2) Lighting variability: colors may be affected by different illumination conditions;

(3) View variability: when we view an object from different angles, the observed color can be different.
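To make camera variability (1) concrete, here is a minimal sketch, not the method used in this project. It assumes, purely for illustration, that a camera differs from a reference camera only by a per-channel gain and offset, and that both cameras see the same color patches under the same lighting and from the same view. The function names and all sample values are hypothetical.

```python
from statistics import fmean


def fit_channel(observed, reference):
    """Least-squares gain and offset so that gain * observed + offset ~ reference."""
    mean_obs, mean_ref = fmean(observed), fmean(reference)
    var = sum((o - mean_obs) ** 2 for o in observed)
    cov = sum((o - mean_obs) * (r - mean_ref) for o, r in zip(observed, reference))
    gain = cov / var
    return gain, mean_ref - gain * mean_obs


def fit_camera(observed_rgb, reference_rgb):
    """Fit one (gain, offset) pair per RGB channel from matched color samples."""
    return [
        fit_channel([p[c] for p in observed_rgb], [p[c] for p in reference_rgb])
        for c in range(3)
    ]


def correct(pixel, params):
    """Apply the per-channel correction to a single RGB pixel."""
    return tuple(g * v + b for v, (g, b) in zip(pixel, params))


# Hypothetical data: four patches as seen by a reference camera, and by a
# second camera simulated with made-up per-channel gains and offsets.
reference = [(0.10, 0.20, 0.30), (0.50, 0.40, 0.20), (0.80, 0.70, 0.60), (0.30, 0.90, 0.50)]
true_gain, true_offset = (1.2, 0.9, 1.1), (0.02, -0.01, 0.03)
observed = [
    tuple(g * v + b for v, g, b in zip(p, true_gain, true_offset)) for p in reference
]

params = fit_camera(observed, reference)
corrected = [correct(p, params) for p in observed]
```

This toy model only works because lighting and view are held fixed. Once the illumination changes or the cameras look at the object from different angles, matched samples no longer share a single true color, which is why (2) and (3) cannot be ignored.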

These variabilities make consistent multiview color correction challenging. See the methodology section for how we tackle them!