Motivation

We are experiencing an exciting time of generative models. With the appearance and development of diffusion models [1], they are gradually replacing the prevalent generative adversarial network (GAN). Though astonishing and high-quality images are generated, there still leave many serious questions that need to be solved. Among them, fairness is a very crucial and nonnegligible problem. Let’s see an example.

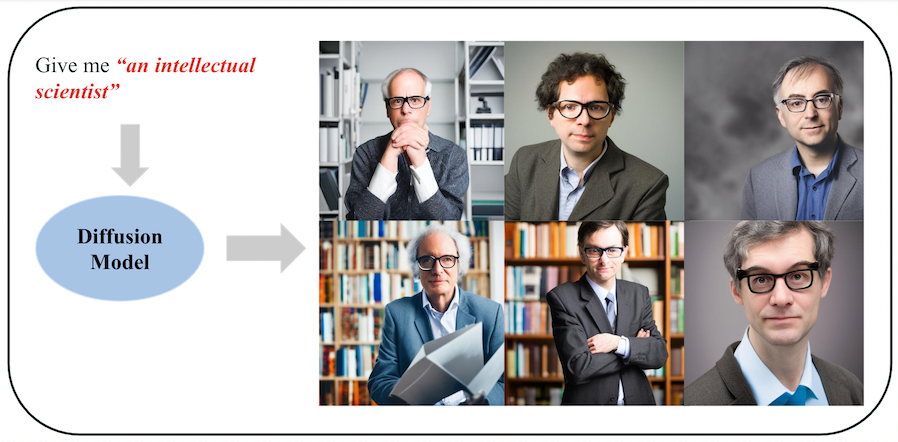

From Figure 1, we can observe that when we ask the stable diffusion model to give me “an intellectual scientist”, all the generated images are men wearing eyeglasses. Generally speaking, we want the generated images to be fair across the sensitive attributes, such as gender, race etc. Besides, we also remove some stereotype such as all scientists wear eyeglasses. Therefore, based on current urgent fairness problems in diffusion models, we want to solve it in this project.

Problem Statement

Because of the imbalance of training dataset (LAION-5B [2]) across different attributes, the biases probably come from it. In order to solve the bias generated from the training dataset, we specifically focus the text-to-image stable diffusion models and define our problem as follow: Given a pre-trained Stable Diffusion model and an user specified text prompt, we want the number of generated images for each subclass across some attribute to be the same.

Some of the straightforward ideas to solve this problems are too difficult to implement, such as rebuilding the whole LAION-5B as a fair dataset, or retrain the Stable Diffusion, which will cost nearly 1 month using 200 GPUs. So, how are we going to solve this problem? Let’s move to the next Method section…

References

- Jonathan Ho, et al. “Denoising Diffusion Probabilistic Models.” NIPS. 2020.

- Schuhmann Christoph, et al. “Laion-5b: An open large-scale dataset for training next generation image-text models” arXiv. 2022