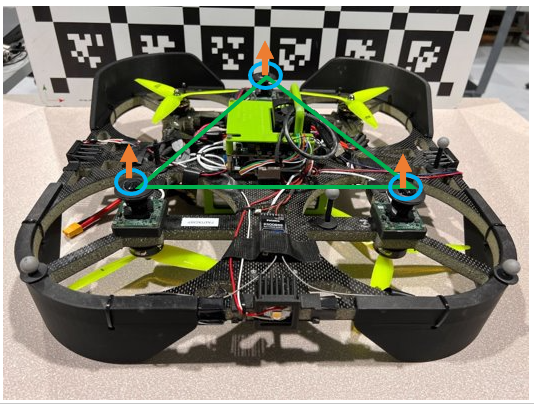

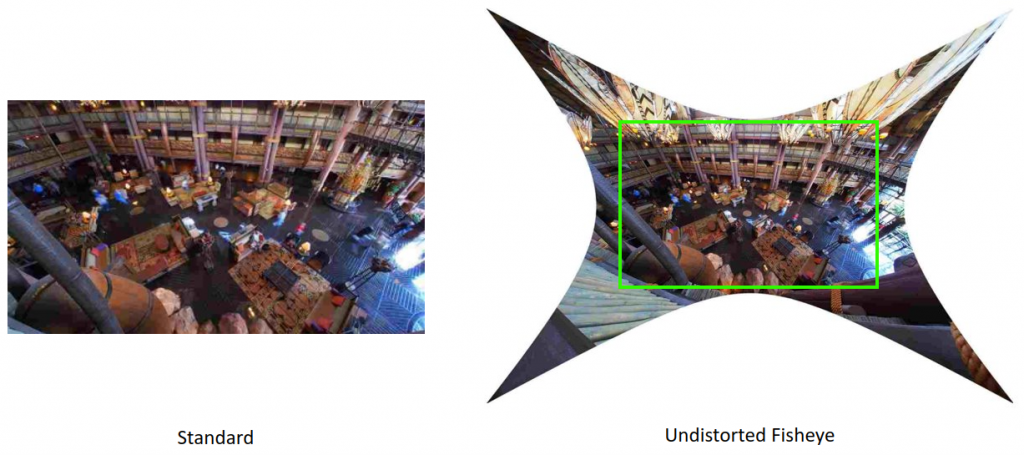

We plan to develop a real-time depth-estimation system for deployment on a drone. The drone would carry six fisheye cameras, whose images would be stitched into a single panorama covering the front, back, top, bottom, left, and right of the environment. The system would then predict a depth value for every pixel of this stitched image.
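To make "a depth for each pixel" concrete, the sketch below shows how one panorama pixel and its predicted depth could be turned into a 3D point. It assumes the stitched image uses an equirectangular layout and that depth means distance along the viewing ray; neither is stated above, and the function names `pixel_to_ray` and `pixel_to_point` are hypothetical.

```python
import math

def pixel_to_ray(row, col, height, width):
    """Unit viewing direction for one panorama pixel.

    Assumption: equirectangular layout, where columns span longitude
    [-pi, pi) and rows span latitude from +pi/2 (top) to -pi/2 (bottom).
    """
    lon = ((col + 0.5) / width - 0.5) * 2.0 * math.pi
    lat = (0.5 - (row + 0.5) / height) * math.pi
    return (
        math.cos(lat) * math.sin(lon),  # x: right
        math.sin(lat),                  # y: up
        math.cos(lat) * math.cos(lon),  # z: forward
    )

def pixel_to_point(row, col, depth_m, height, width):
    """Scale the unit ray by the predicted depth to get a 3D point.

    Assumption: depth_m is the distance along the ray, in metres.
    """
    x, y, z = pixel_to_ray(row, col, height, width)
    return (depth_m * x, depth_m * y, depth_m * z)

# Illustrative values only: a 512 x 1024 panorama and a 7.5 m prediction.
point = pixel_to_point(row=100, col=300, depth_m=7.5, height=512, width=1024)
print(point)
```

Under these assumptions, applying the same mapping to every pixel yields a dense point cloud of the full surroundings from a single panorama.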

Holonomic robots such as drones have six degrees of freedom and can therefore move in any direction, so they need to perceive their surroundings in every direction. Human drivers, too, rely on a wide field of view rather than only looking forward when making decisions. In the case of autonomous vehicles and self-driving cars, a LIDAR helps provide this all-around coverage. However, we want a LIDAR-free system.

Besides being costly, LIDARs are heavy. In a self-driving car this matters little, but on a drone the sensor would take up most of the payload. Similarly, given a drone's limited power capacity, a LIDAR would end up consuming most of the available power.

The point cloud produced by a LIDAR gets sparser as the distance increases. In drone applications, the distances to the environment, especially above and below the drone, can be very large, and at such ranges the sparse point cloud is of little help. In addition, LIDAR performs poorly in dusty and rainy conditions.
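The loss of density with range follows from simple geometry: for a fixed angular step between beams, the gap between neighbouring returns is approximately the arc length, distance times angle in radians. A minimal sketch of that relation follows; the 0.2 degree angular resolution is a made-up illustrative value, not the specification of any particular sensor, and `point_spacing` is a hypothetical helper.

```python
import math

def point_spacing(distance_m, angular_resolution_deg):
    """Approximate gap between neighbouring LIDAR returns at a given range.

    Uses the arc-length relation s = d * dtheta, with dtheta in radians.
    """
    return distance_m * math.radians(angular_resolution_deg)

# Hypothetical angular resolution, chosen only for illustration.
ANGULAR_RESOLUTION_DEG = 0.2

for distance_m in (5, 20, 50, 100):
    gap_cm = 100 * point_spacing(distance_m, ANGULAR_RESOLUTION_DEG)
    print(f"{distance_m:>4} m -> {gap_cm:.1f} cm between neighbouring points")
```

The gap grows linearly with range, so the number of points landing on a surface of fixed size falls off roughly with the square of the distance.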

On a drone, all of these LIDAR-related issues are doubled, since at least two such sensors are needed.

We also plan to use fisheye images as these capture more information due to their wider field of view.

Previous work in multi-view stereo compromises either accuracy or real-time performance: these methods either rely heavily on powerful GPUs or use non-learning layers, which leads to a drop in accuracy.