Project Summary

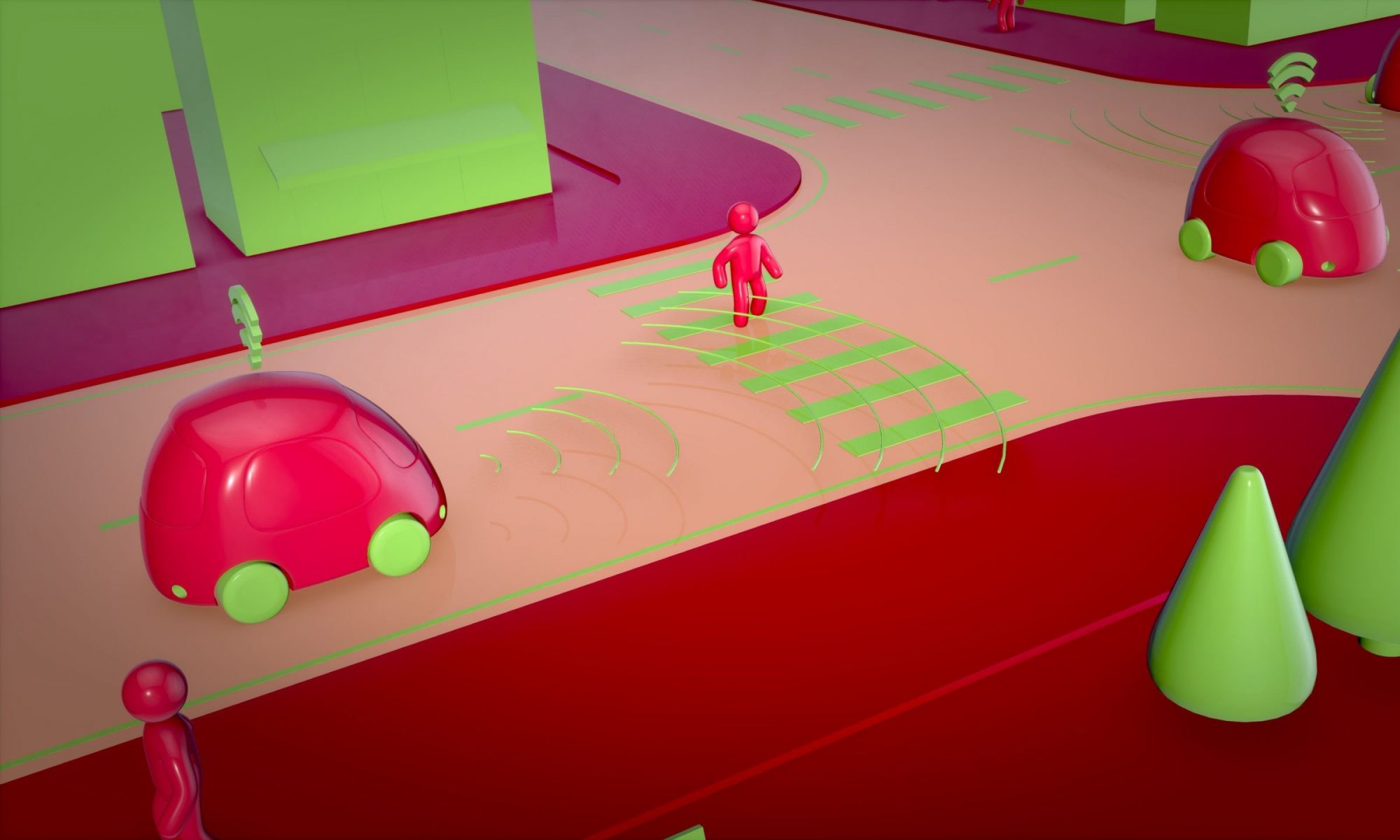

Improve reinforcement learning (RL) based self-driving algorithms by adding semantically segmented bird’s-eye-view (BEV) images to the state space.

Motivation

The RL algorithms currently used at Auton Lab to learn autonomous navigation use the CARLA simulator as the source of training and testing data. To learn how to drive, they rely on ground-truth information such as the distance to the nearest vehicle, the state of traffic lights, and the deviation from the planned trajectory. Such information is hard to obtain in the real world.

We hope to bring in more visual information from which such state cues can be inferred rather than measured directly, and thereby bring the trained driving agents closer to real-world conditions.

Problem

We plan to integrate semantically segmented images from a bird’s-eye-view (BEV) camera position into the state space as an alternative to the ground-truth (GT) sensor state space currently used.
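
As a rough illustration of what an image-based state could look like, the sketch below turns a short sequence of BEV label maps into a stacked one-hot tensor. The class IDs, image size, and the helper names `one_hot_bev` and `stack_frames` are assumptions made for this example; they are not taken from the project code.

```python
import numpy as np

# Hypothetical label set for illustration only; the real classes depend on
# how the simulator's semantic-segmentation camera is configured.
NUM_CLASSES = 4  # e.g. 0=background, 1=road, 2=vehicle, 3=traffic light


def one_hot_bev(label_map: np.ndarray, num_classes: int = NUM_CLASSES) -> np.ndarray:
    """Convert an (H, W) integer label map into a (C, H, W) one-hot array."""
    class_ids = np.arange(num_classes)[:, None, None]  # shape (C, 1, 1)
    return (class_ids == label_map[None]).astype(np.float32)


def stack_frames(label_maps: list) -> np.ndarray:
    """Stack T label maps into a (T, C, H, W) image-based state."""
    return np.stack([one_hot_bev(m) for m in label_maps], axis=0)


# Three random 8x8 label maps stand in for consecutive BEV frames.
rng = np.random.default_rng(0)
frames = [rng.integers(0, NUM_CLASSES, size=(8, 8)) for _ in range(3)]
state = stack_frames(frames)
print(state.shape)  # (3, 4, 8, 8)
```

Each pixel has exactly one active channel, so the original label map can be recovered with an `argmax` over the class axis.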

Solution

We use a multi-headed model, consisting of a current-frame-stack encoder and a future-frame-stack predictor, to create a latent embedding of the temporal sequence of frames. This embedding is intended to help the RL model make better decisions.
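
One plausible reading of this design is a shared encoder that produces the latent embedding, with one head reconstructing the current frame stack and a second head predicting the future frame stack. The sketch below uses plain linear layers in NumPy purely to show the data flow. The class name `TwoHeadFrameModel`, the layer sizes, and the summed squared-error loss are all assumptions, not the project's actual architecture.

```python
import numpy as np


class TwoHeadFrameModel:
    """Toy linear stand-in: shared encoder plus two heads (hypothetical layout)."""

    def __init__(self, stack_dim: int, latent_dim: int, seed: int = 0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(stack_dim)
        self.w_enc = rng.normal(0.0, scale, size=(stack_dim, latent_dim))
        self.w_current = rng.normal(0.0, scale, size=(latent_dim, stack_dim))
        self.w_future = rng.normal(0.0, scale, size=(latent_dim, stack_dim))

    def encode(self, current_stack: np.ndarray) -> np.ndarray:
        """Map a (B, T, C, H, W) frame stack to a (B, latent_dim) embedding."""
        flat = current_stack.reshape(current_stack.shape[0], -1)
        return np.tanh(flat @ self.w_enc)

    def forward(self, current_stack: np.ndarray):
        """Return the embedding and the flattened outputs of both heads."""
        z = self.encode(current_stack)
        return z, z @ self.w_current, z @ self.w_future


def two_head_loss(model, current_stack, future_stack) -> float:
    """Sum of current-stack reconstruction error and future-stack prediction error."""
    _, recon, pred = model.forward(current_stack)
    b = current_stack.shape[0]
    recon_err = np.mean((recon - current_stack.reshape(b, -1)) ** 2)
    pred_err = np.mean((pred - future_stack.reshape(b, -1)) ** 2)
    return float(recon_err + pred_err)


# Random arrays stand in for batches of current and future BEV frame stacks.
B, T, C, H, W = 2, 3, 4, 8, 8
rng = np.random.default_rng(1)
current = rng.random((B, T, C, H, W))
future = rng.random((B, T, C, H, W))

model = TwoHeadFrameModel(stack_dim=T * C * H * W, latent_dim=16)
z, recon, pred = model.forward(current)
loss = two_head_loss(model, current, future)
print(z.shape, recon.shape, pred.shape)
```

In this reading, only the embedding `z` would be passed to the RL policy; the two heads exist to shape the embedding during training.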