Overview

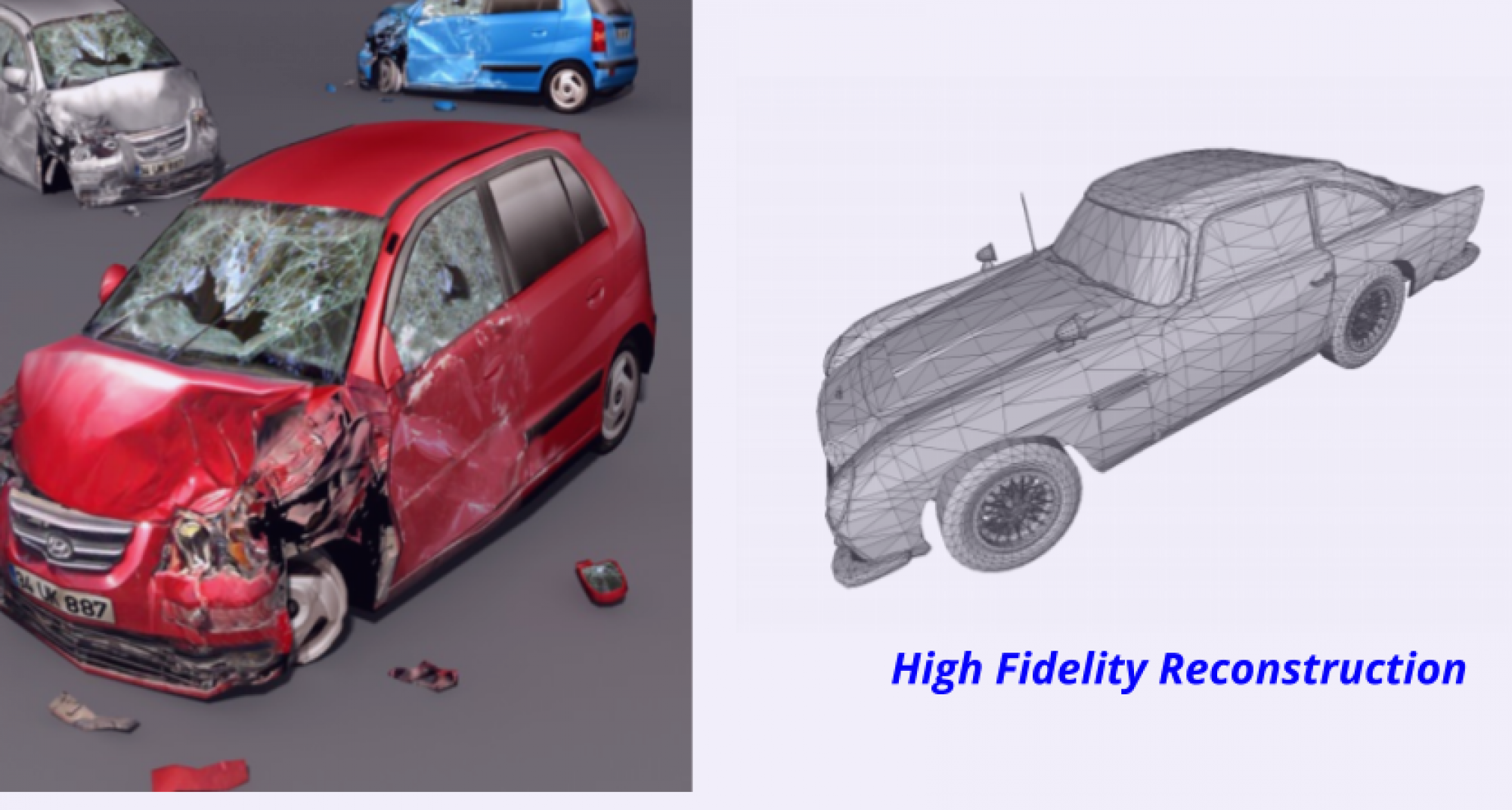

The primary goal of this project is to achieve high-fidelity 3D reconstruction by learning implicit representations. In contrast to the existing literature, we aim to design a method that can be used to learn these representations for any arbitrary shape, be it open/closed, or shapes which have ill-defined genus. The applications of this method include high-fidelity reconstruction of damaged vehicles for insurance purposes, and automatic computation of building roof surface area via 3D reconstruction from aerial imagery.

Project is Sponsored by Verisk Analytics and advised by Prof. Laszlo Jeni at CMU and Dr. Maneesh Singh from Verisk.

Approach

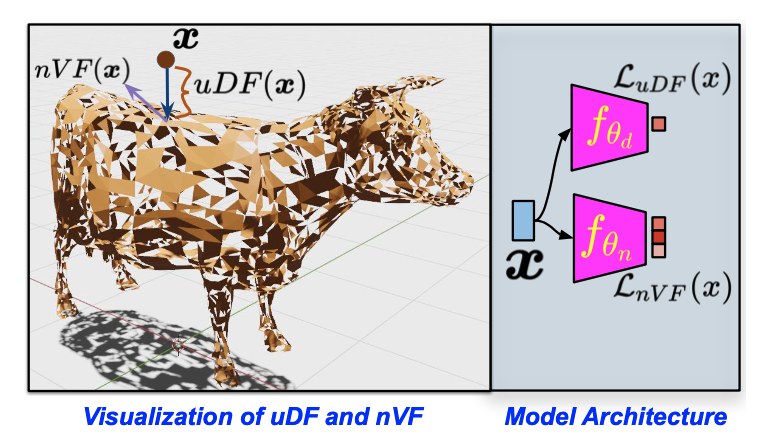

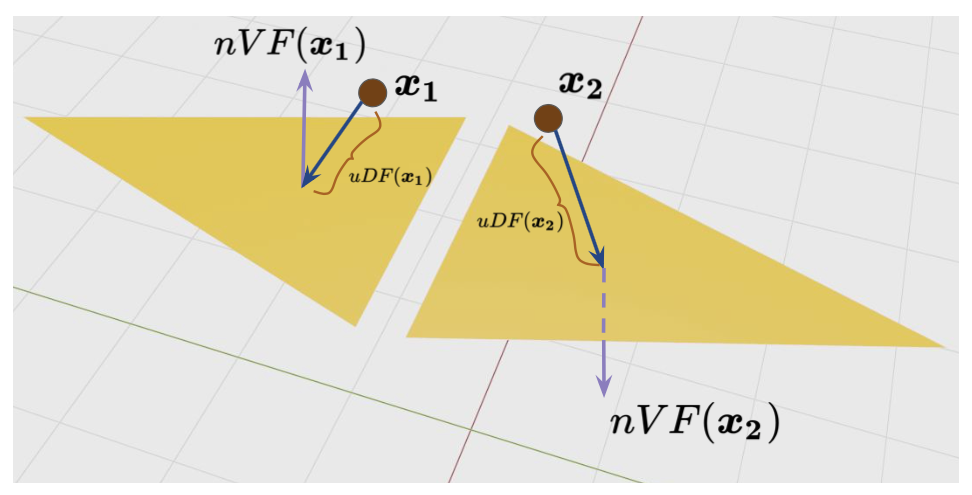

We disentangle the implicit representation of a shape into an unsigned distance field uDF and a normal vector field nVF. This decomposition enables us to represent both open and closed shapes with arbitrary topology, which, to the best of our knowledge, is a significant improvement over existing methods. We aim to model these functions using feed-forward networks with non-linear activation functions.

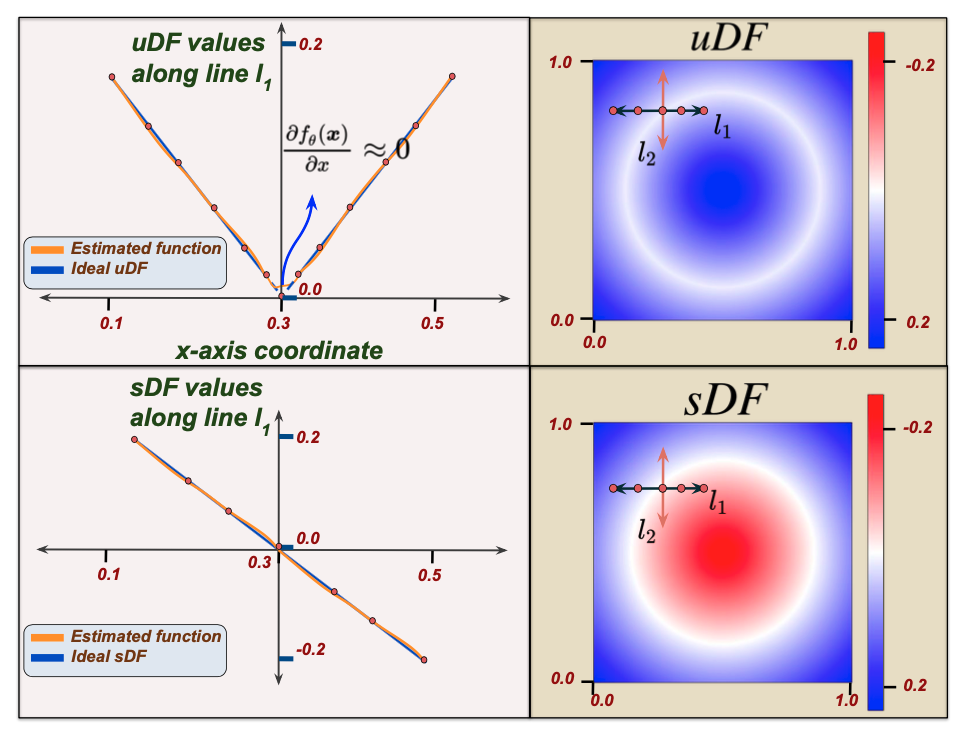

We begin with a formal definition of a uDF: it’s a function that outputs the closest unsigned distance to the surface from any given point in 3D space. Note that this is in contrast to an sDF which is meant to output negative distances inside the shape and positive distances outside. Several notable current approaches for representing shapes, thus bound to the assumption that the underlying surface is watertight. We argue that an sDF can inherently describe only closed shapes. To relax this assumption, we model a 3D shape using a uDF which can represent both watertight and non-watertight shapes equally well.

As can be noted, trivially removing the sign of the sDF leads to a uDF. However, this sacrifices some important properties of the sDF leading to a new set of challenges which we need to address. (1) The uDF is non-differentiable at the surface (see Figure 2) implying it can’t be reliably trained using points sampled from the surface. We overcome this by never actually sampling the training data points on the surface but slightly away from the surface; (2) Unlike sDFs, we cannot reliably extract the surface normal from a uDF due to its non-differentiability (See Figure 2). Since estimation of high quality surface normals is important for several downstream tasks, we propose to learn a normal vector field (nVF), which, for any 3D location, , represents the nVF normal to the surface point closest to

. Formally,

Given a 3D shape represented by the noisy triangle soup, we construct training samples, , which contain a point,

, the uDF and the nVF evaluated at

We first densely sample a set of {points, surface normal} pairs from the triangle soup, by uniformly sampling points on each triangle face. Let’s call this set of points $ latex \mathcal{X}= {(\boldsymbol{x_s}, \boldsymbol{v_s})}$. Since each point is sampled from a triangle face, the normal to the triangle face provides the associated surface normal for that point.

Given this set $ latex \mathcal{X}$, the set is constructed by sampling points

in 3D space and finding the nearest corresponding point in

to construct the training sample

. The set

is used train the DNNs to approximate the uDF and the nVF. More concretely, we train the DNN

to approximate uDF, and DNN

to approximate nVF.

Before we describe how is trained, please note that uDF naturally correspond to unoriented surfaces (which are also logically necessitated by open surfaces). However, for most ray-casting applications this is not an issue as the direction of the first intersected surface can be chosen based on the direction of the ray. So, the ambiguity of

or

can be handled. This implies a

representation in the DNN suffices. However, such a representation needs to be learnt from a noisy triangle soup with oriented surface normals with possible directional incoherence (in the

sense) between adjacent triangles (See Figure 3). To allow for this, we optimize the minimum of the two possible losses, computed from each

or

. More concretely,

This allows for the network to learn surface normals . The incoherence in the noisy triangle soup is handled by the continuity property of the DNNs and, practically, coherent normal fields are learnt as verified in our experiments. Thus, after training, the zero-level set of

which approximates the uDF, represents points on the surface, while

approximating nVF, represents the surface normals of the corresponding points on this level set.

Sphere Tracing uDFs

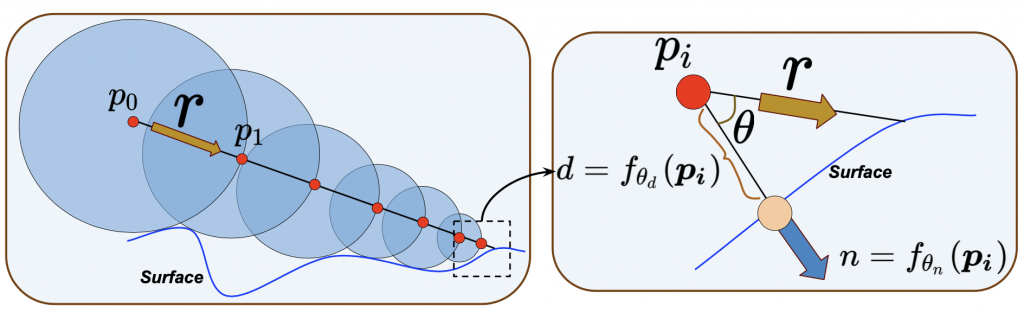

Sphere tracing is a standard technique to render images from a distance field that represents the shape. To create an image, rays are cast from the focal point of the camera, and their intersection with the scene is computed using sphere tracing. Roughly speaking, irradiance/ radiance computations are performed at the point of intersection to obtain the color of the pixel for that ray.

The sphere tracing process can be described as follows: given a ray, , originating at point,

, iterative marching along the ray is performed to obtain its intersection with the surface. In the first iteration, this translates to taking a step along the ray with a step size of

to obtain the next point

. Since

is the smallest distance to the surface, the line segment

of the ray is guaranteed not to intersect the surface (

can touch but not transcend the surface). The above step is iterated

times till

is

close to the surface. The i-th iteration is given by

and the stopping criteria

.

Note that for uDF, the above procedure can be used to get close to the surface but doesn’t obtain a point on the surface. One we are close enough to the surface, we can use a local planarity assumption (without loss of generalization) to obtain the intersection estimate. This is illustrated in Figure 4 and is obtained in the following manner: if we stop the sphere tracing of the uDF at a point , we evaluate the nVF as

, and compute the cosine of the angle between the nVF and the ray direction. The estimate is then obtained as

.

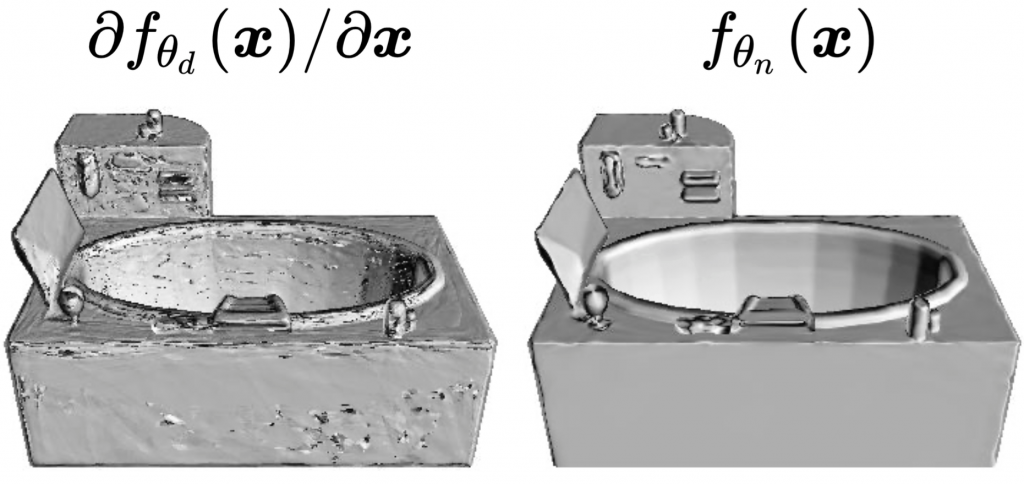

Benefit of using nVFs

In Figure 5, we visualize ray casting using normals obtained by differentiating the uDF (Left) and ray casting using the surface normals estimated by the nVF (Right). This high-quality rendering empirically validates the need for learning an nVF alongside a uDF.