PART1: SLAM in Dynamic Environments (SPRING 2019)

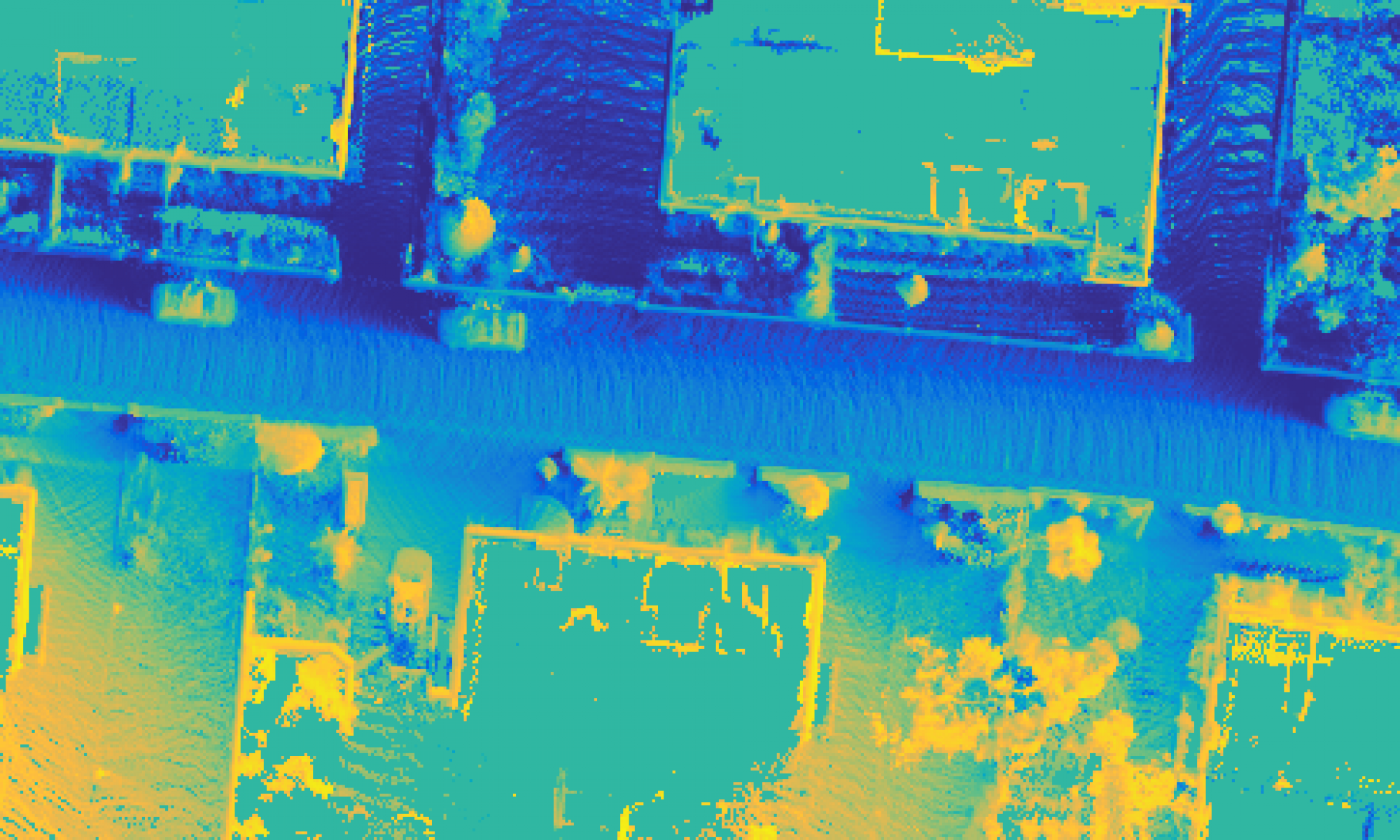

SLAM (Simultaneous Localization and Mapping) is the computational problem of constructing or updating a map of an unknown environment while simultaneously keeping track of an agent’s location within it. It has been widely applied in autonomous driving, drones, planetary rovers, and mobile robots. A mobile robot in operation can use a SLAM system to understand its surrounding environment. A SLAM system relies on static features in the scene to localize reliably, so our project focuses on reducing the effect of dynamic objects in the scene.

Motivation

Mobile robots use a SLAM system to better understand their environment

Problem

Dynamic objects often disrupt camera tracking and mapping

Solution

Filter out the dynamic features and only rely on static features
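As a minimal sketch of this filtering step, the snippet below drops keypoints that land on pixels flagged as dynamic. It assumes a per-pixel boolean mask is already available from some upstream detector; the function name `filter_static_keypoints`, the mask layout, and the sample values are hypothetical and chosen only for illustration, not taken from the project.

```python
import math
from typing import List, Sequence, Tuple

Keypoint = Tuple[float, float]  # (x, y) in pixel coordinates


def filter_static_keypoints(
    keypoints: Sequence[Keypoint],
    dynamic_mask: Sequence[Sequence[bool]],
) -> List[Keypoint]:
    """Keep only keypoints that do not fall on a dynamic pixel.

    dynamic_mask[row][col] is True where the pixel is assumed to belong
    to a moving object (hypothetical input from an upstream detector).
    """
    height = len(dynamic_mask)
    width = len(dynamic_mask[0]) if height else 0
    static = []
    for x, y in keypoints:
        col, row = math.floor(x), math.floor(y)
        if not (0 <= row < height and 0 <= col < width):
            continue  # keypoint lies outside the image: drop it
        if not dynamic_mask[row][col]:
            static.append((x, y))
    return static


# Toy 4x4 image with a 2x2 dynamic region in the middle.
mask = [
    [False, False, False, False],
    [False, True,  True,  False],
    [False, True,  True,  False],
    [False, False, False, False],
]
keypoints = [(0.5, 0.5), (1.2, 1.7), (3.0, 2.0), (2.9, 2.1)]
static_keypoints = filter_static_keypoints(keypoints, mask)
print(static_keypoints)  # [(0.5, 0.5), (3.0, 2.0)]
```

Only the surviving keypoints would then be passed on to tracking and mapping. How the mask itself is produced is outside the scope of this sketch.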

Image source: Bescos, Berta, et al. “DynaSLAM: Tracking, Mapping, and Inpainting in Dynamic Scenes.” IEEE Robotics and Automation Letters 3.4 (2018): 4076-4083.

PART2: Illumination Invariant Cross-spectral Feature Matching (FALL 2019)

When a robot performs visual SLAM in a scene, the illumination conditions can keep changing. In some cases the change is severe, and localizing reliably through it requires a robust feature that is invariant to non-linear intensity variations. This type of feature has many real-world applications, such as re-localization across day-night cycles and cross-spectral registration. Our project focuses on developing such a feature with a non-learning method.

Motivation

Indoor robot localization across day-night cycles and different illumination conditions

Problem

Different illumination conditions or spectral bands cause non-linear intensity changes that make feature matching hard

Solution

Develop a pose-invariant feature that is fast and robust to changes in illumination
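To illustrate why a non-linear intensity change breaks raw intensity comparison but can leave an ordering-based descriptor unchanged, here is a small sketch using the census transform, a classic non-learned descriptor. It is a stand-in chosen for illustration, not the feature developed in this project, and it only handles monotonic intensity changes, whereas cross-spectral changes need not be monotonic. The patch values and the gamma value are made up.

```python
from typing import List, Tuple

Patch = List[List[float]]


def census_signature(patch: Patch) -> Tuple[int, ...]:
    """Bit per neighbour: 1 if the neighbour is darker than the centre pixel."""
    size = len(patch)
    mid = size // 2
    centre = patch[mid][mid]
    return tuple(
        1 if patch[r][c] < centre else 0
        for r in range(size)
        for c in range(size)
        if (r, c) != (mid, mid)
    )


def apply_gamma(patch: Patch, gamma: float) -> Patch:
    """Simulate a non-linear (but monotonic) illumination change."""
    return [[255.0 * (v / 255.0) ** gamma for v in row] for row in patch]


def sum_abs_diff(a: Patch, b: Patch) -> float:
    """Raw intensity distance between two patches of the same size."""
    return sum(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))


# Made-up 3x3 patch and a darkened version of it.
patch = [
    [10.0, 200.0, 30.0],
    [90.0, 100.0, 120.0],
    [250.0, 60.0, 100.0],
]
darkened = apply_gamma(patch, 2.5)

raw_distance = sum_abs_diff(patch, darkened)
same_signature = census_signature(patch) == census_signature(darkened)

print(raw_distance > 0)         # True: raw intensities no longer match
print(same_signature)           # True: the intensity ordering is preserved
print(census_signature(patch))  # (1, 0, 1, 1, 0, 0, 1, 0)
```

The signature survives because it depends only on which pixels are darker than the centre, and a monotonic mapping preserves that ordering. A feature meant for cross-spectral matching would need to tolerate a broader class of changes than this toy example covers.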