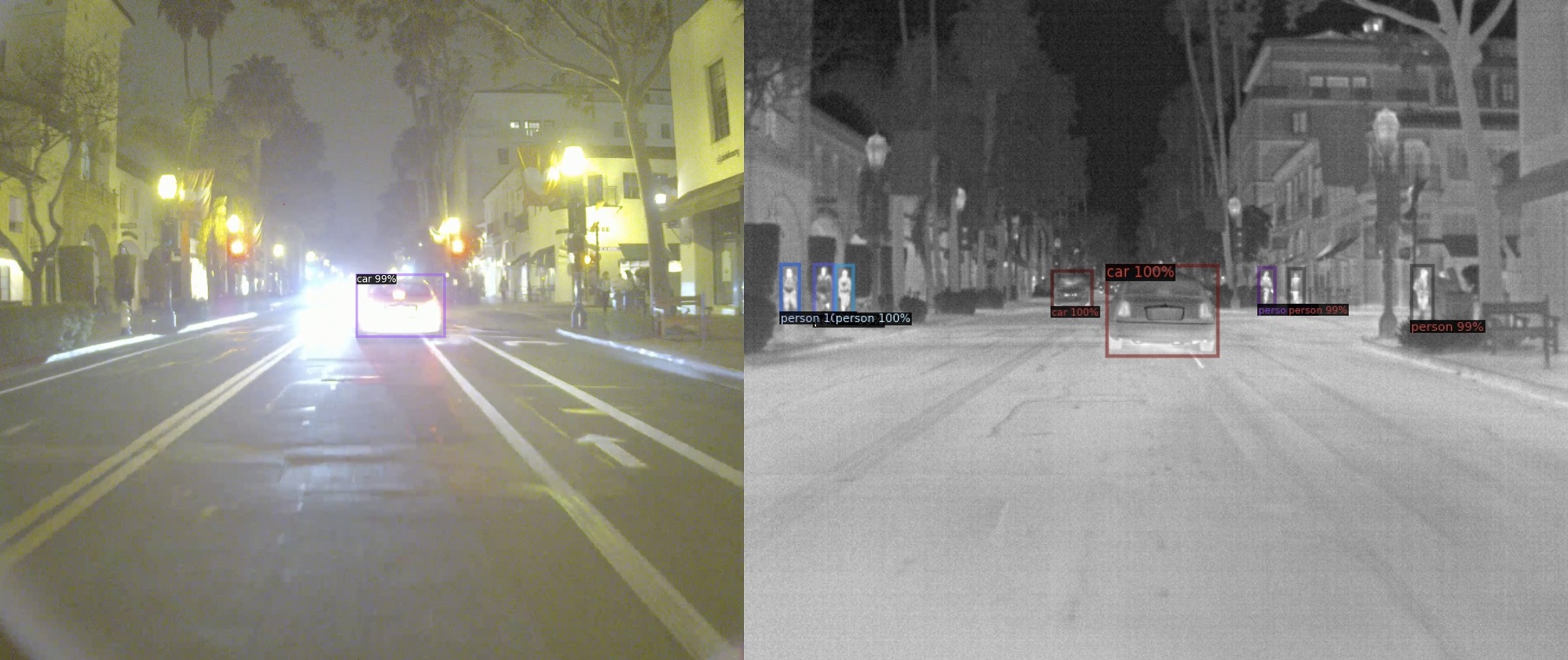

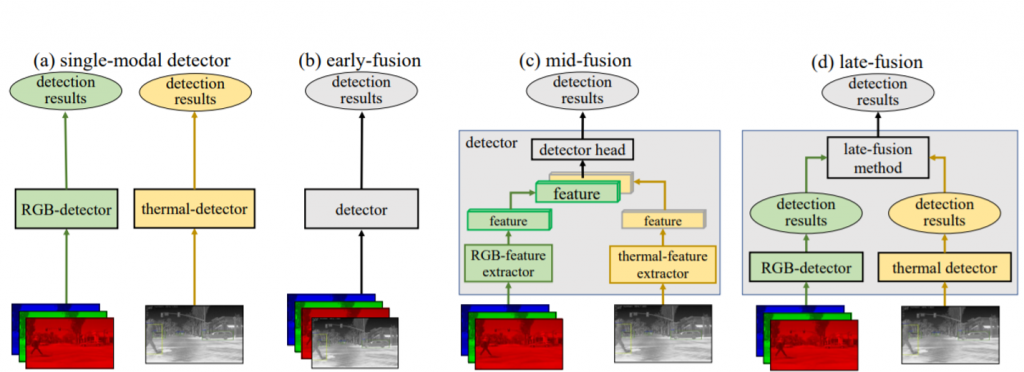

We explored different fusion strategies for RGB-thermal image pairs: single-modality detection, early fusion, middle fusion, and late fusion. Each strategy is designed as follows:

(a) Single-modal detector

For the KAIST dataset, we trained two separate detectors, one on RGB images and one on thermal images. For FLIR, which has no RGB annotations, we trained a thermal-only detector.

(b) Early fusion

We concatenated the RGB and thermal images channel-wise into a 4-channel input and trained a single detector on it.
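As a minimal sketch of how such a 4-channel input could be built, the NumPy snippet below stacks a 3-channel RGB image and a single-channel thermal image along the channel axis. The function name `early_fuse`, the image size, and the channel-last layout are assumptions made for illustration; the pair is assumed to be spatially aligned and of equal resolution.

```python
import numpy as np

def early_fuse(rgb, thermal):
    """Stack an H x W x 3 RGB image and an H x W (or H x W x 1) thermal image
    into a single H x W x 4 array (hypothetical helper, channel-last layout)."""
    if thermal.ndim == 2:
        # Add a trailing channel axis so both arrays are 3-dimensional.
        thermal = thermal[..., np.newaxis]
    if rgb.shape[:2] != thermal.shape[:2]:
        raise ValueError("RGB and thermal images must have the same height and width")
    return np.concatenate([rgb, thermal], axis=-1)

# Dummy aligned pair; the 512 x 640 size is only an example.
rgb = np.zeros((512, 640, 3), dtype=np.float32)
thermal = np.ones((512, 640), dtype=np.float32)
fused = early_fuse(rgb, thermal)
print(fused.shape)  # (512, 640, 4)
```

A detector that consumes this input needs a first convolution layer that accepts 4 input channels instead of 3.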

(c) Middle fusion

We trained a two-stream feature extractor, one stream for RGB and one for thermal imagery, then concatenated the two feature maps before the region proposal network (RPN) and ROI heads.

(d) Late fusion

We proposed Bayesian late fusion to fuse the detection results from two independent modalities.

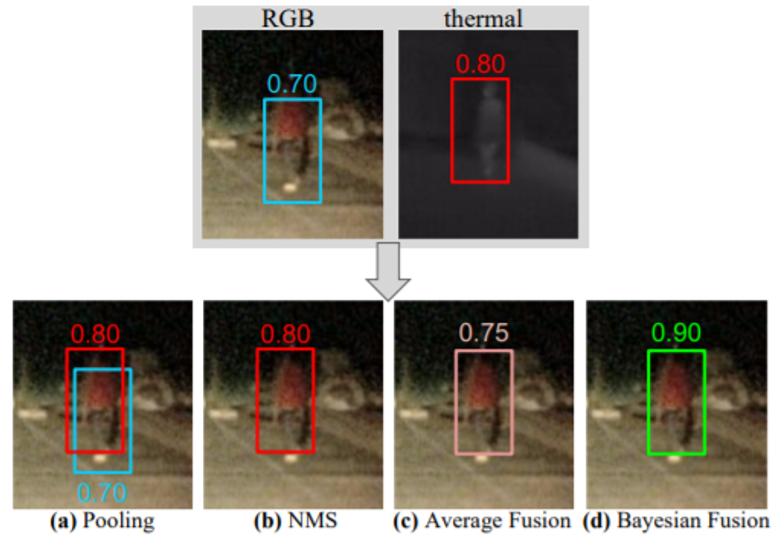

Suppose we have one set of detections from the RGB detector and another from the thermal detector. The two sets can be fused in the following ways:

(a) Pooling

Simply pool all detections from the two modalities. However, this produces multiple detections overlapping the same ground-truth object.

(b) Non-maximum suppression (NMS)

Find overlapping boxes and keep only the highest-scoring one as the final output. However, NMS fails to “fuse” information from multiple modalities, since each final detection is supported by only one modality and the lower-scoring detection is discarded.
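To make this behavior concrete, here is a small pure-Python sketch of greedy NMS applied to the pooled detections of both modalities. The detection format `(box, score)` with boxes as `(x1, y1, x2, y2)`, the function names, the example boxes, and the IoU threshold of 0.5 are all assumptions chosen for illustration.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def nms(detections, iou_thresh=0.5):
    """Greedy NMS over a list of (box, score) pairs; returns the kept pairs."""
    remaining = sorted(detections, key=lambda d: d[1], reverse=True)
    kept = []
    while remaining:
        best = remaining.pop(0)
        kept.append(best)
        # Drop every lower-scoring box that overlaps the kept one.
        remaining = [d for d in remaining if iou(best[0], d[0]) < iou_thresh]
    return kept

# Made-up detections: both modalities see the first object, only thermal sees the second.
rgb_dets = [((10, 10, 50, 90), 0.8)]
thermal_dets = [((12, 11, 52, 92), 0.7), ((200, 40, 240, 120), 0.6)]
fused = nms(rgb_dets + thermal_dets)
print(fused)
```

In this example the thermal detection with score 0.7 is discarded outright, so the surviving score of 0.8 reflects the RGB detector alone.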

(c) Average fusion

A straightforward modification of NMS is to average the scores of overlapping detections from different modalities. However, the averaged score is never higher than the maximum score that NMS would keep, so agreement between modalities cannot raise confidence.
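The following pure-Python sketch illustrates score averaging over overlapping detections. The detection format `(box, score)`, the function names, the IoU threshold, and the choice to keep the highest-scoring box of each cluster are assumptions for illustration, since the text does not specify how boxes are merged.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def average_fusion(detections, iou_thresh=0.5):
    """Cluster overlapping (box, score) pairs greedily and average their scores."""
    remaining = sorted(detections, key=lambda d: d[1], reverse=True)
    fused = []
    while remaining:
        best = remaining.pop(0)
        overlapping = [d for d in remaining if iou(best[0], d[0]) >= iou_thresh]
        remaining = [d for d in remaining if iou(best[0], d[0]) < iou_thresh]
        cluster = [best] + overlapping
        mean_score = sum(d[1] for d in cluster) / len(cluster)
        # Assumption: keep the highest-scoring box, replace its score by the mean.
        fused.append((best[0], mean_score))
    return fused

# Made-up detections of the same object from the two modalities.
rgb_dets = [((10, 10, 50, 90), 0.8)]
thermal_dets = [((12, 11, 52, 92), 0.7)]
fused = average_fusion(rgb_dets + thermal_dets)
print(fused)
```

Here the two detections agree on the object, yet the fused score (about 0.75) is lower than the 0.8 that NMS would have kept.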

(d) Bayesian late fusion

● Assume the two modalities are conditionally independent given the class label:

p(x1, x2 | y) = p(x1|y)p(x2|y)

where x1 is the RGB input, x2 is the thermal input, and y is the class label.

● Bayes rule:

p(y|x1, x2) = p(x1, x2|y) p(y) / p(x1, x2)

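● Multi-modal posterior, obtained by substituting the independence assumption into Bayes rule and using p(xi|y) ∝ p(y|xi) / p(y) for each modality:

log p(y|x1, x2) = log p(y|x1) + log p(y|x2) – log p(y) – constant

where the constant does not depend on y.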
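The multi-modal posterior can be sketched in a few lines of Python. This is an illustrative example, not the released implementation: the function name `bayesian_fuse`, the uniform class prior used by default, the `eps` clamp, and the two-class scores are assumptions, and matching detections across modalities (for example by box overlap) is left out.

```python
import math

def bayesian_fuse(p_rgb, p_thermal, prior=None, eps=1e-12):
    """Fuse two per-class posteriors p(y|x1) and p(y|x2) into p(y|x1, x2).

    Implements log p(y|x1) + log p(y|x2) - log p(y), then normalizes over y,
    which removes the constant term. A uniform prior is assumed if none is given.
    """
    num_classes = len(p_rgb)
    if prior is None:
        prior = [1.0 / num_classes] * num_classes
    log_post = [
        math.log(max(a, eps)) + math.log(max(b, eps)) - math.log(max(c, eps))
        for a, b, c in zip(p_rgb, p_thermal, prior)
    ]
    # Subtract the maximum before exponentiating for numerical stability.
    shift = max(log_post)
    unnorm = [math.exp(v - shift) for v in log_post]
    total = sum(unnorm)
    return [u / total for u in unnorm]

# Made-up scores for [object, background] from each detector.
fused = bayesian_fuse([0.8, 0.2], [0.7, 0.3])
print(fused)
```

With these inputs the fused object probability is about 0.90, higher than either single-modal score, which is the behavior that averaging and NMS cannot produce when both modalities agree.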